Never Stop Coding

AI Gateway for Multi-Provider LLMs

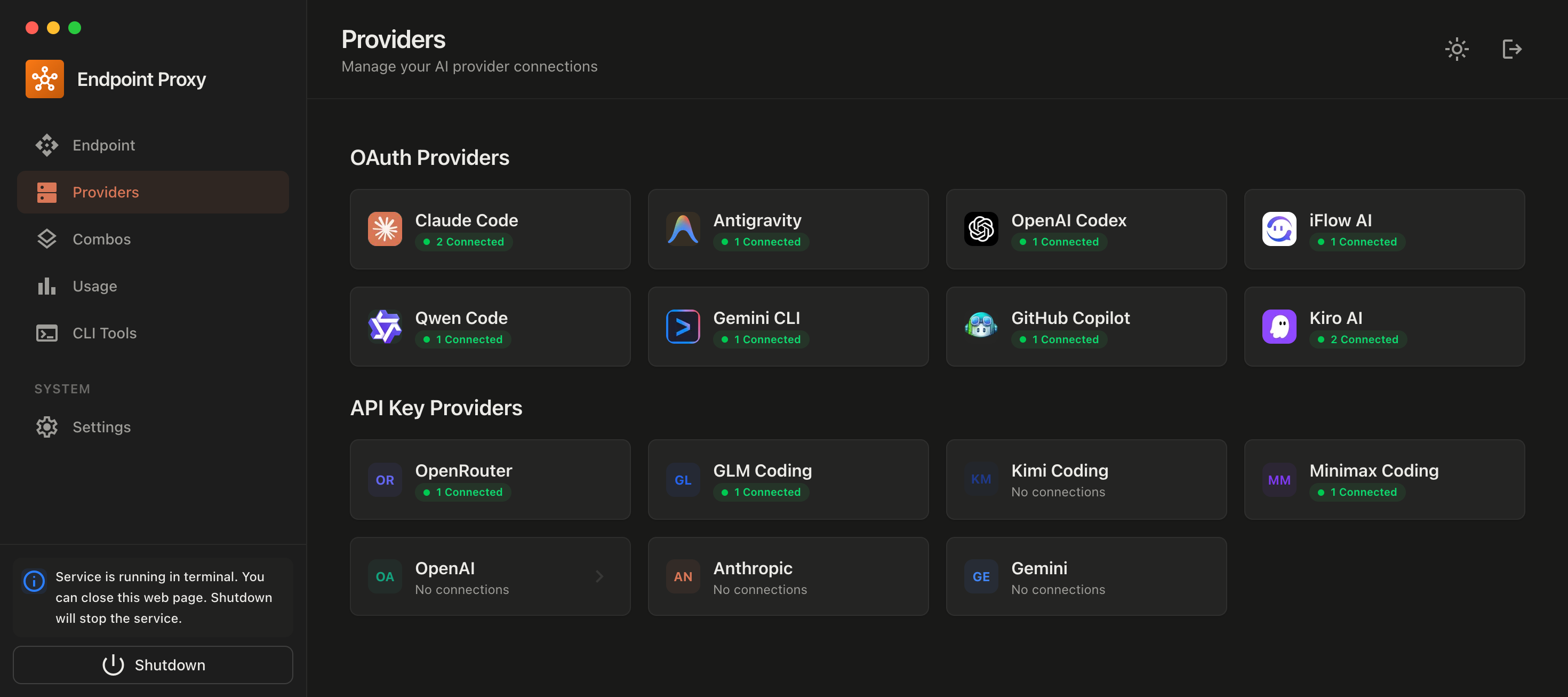

🤖 Free AI Provider for your favorite coding agents

Connect any AI-powered IDE or CLI tool through OmniRoute, a free API gateway for unlimited coding.

📡 All agents connect via localhost:20128/v1 or cloud.omniroute.online/v1: one config, unlimited models and quota.
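As a rough illustration of what "one config" could look like from a script, here is a minimal sketch that builds a request against the local endpoint. It rests on several assumptions that the page does not state: that the gateway speaks an OpenAI-style chat API under `/v1` (so `/chat/completions` is a guess based on the path), that the local endpoint uses `http` and the cloud one `https`, and that a bearer token is accepted. The model name `example-model` and the key `placeholder-key` are hypothetical placeholders, not real OmniRoute values.

```python
import json
import urllib.request

# Hosts and paths come from the text above; the http/https schemes are assumptions.
LOCAL_BASE_URL = "http://localhost:20128/v1"
CLOUD_BASE_URL = "https://cloud.omniroute.online/v1"


def build_chat_request(base_url, model, prompt, api_key="placeholder-key"):
    """Build (but do not send) a chat request for an OpenAI-style endpoint.

    Assumption: the gateway accepts POST {base_url}/chat/completions with a
    JSON body of the form {"model": ..., "messages": [...]}.
    """
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


if __name__ == "__main__":
    # "example-model" is a hypothetical model identifier.
    req = build_chat_request(LOCAL_BASE_URL, "example-model", "Hello")
    print(req.get_method(), req.full_url)
    # Sending requires a running gateway:
    # with urllib.request.urlopen(req) as resp:
    #     print(json.load(resp))
```

Switching between the local and cloud gateway would then only mean passing `CLOUD_BASE_URL` instead of `LOCAL_BASE_URL`; check the OmniRoute documentation for the actual routes, authentication scheme, and model identifiers before relying on any of this.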

iFlow AI

Qwen Code

Kiro AI

Gemini CLI

Claude Code

OpenAI

Anthropic

Google AI

Antigravity

OpenClaw

Groq

DeepSeek

xAI (Grok)

Mistral

Together AI

Fireworks

Perplexity

Cerebras

Cohere

OpenRouter

GLM (ZhipuAI)

MiniMax

Moonshot

Nebius

NVIDIA

Sambanova

Novita AI

Chutes AI

Kluster AI

InfiniAI

Targon

AI21 Labs

Lambda

Lepton AI

Deepgram

Alibaba DashScope

LongCat AI

Pollinations

AI/ML API

Kimi Coding

Alibaba Coding

Ollama Cloud

GitLab Duo

Cline (OAuth)

Blackbox Web

Brave Search

Exa Search

Tavily Search

Serper