We’ve transitioned to a Sustaining Engineering model to better serve the customers who rely on us every day. Our mission is simple: to provide the most stable, secure, and reliable environment for your apps and data. We will continue releasing features and functionality that align with our Sustaining Engineering goals and provide a more robust and efficient platform to our customers.

Today we are excited to share three recent enhancements:

- 1GB slug limits: We’ve doubled the default maximum compressed slug size from 500MB to 1GB to support complex libraries and AI/ML frameworks.

- Longer build timeouts: More flexibility for complex builds that need extra time to compile.

- Heroku CLI v11: A complete architectural move to ESM and oclif v4, delivering faster execution and better maintainability.

You can follow the Heroku Changelog to keep track of the work we do to keep your apps reliable and secure.

The post Heroku March 2026 Update appeared first on Heroku.

Heroku CLI v11 is now available. This release represents the most significant architectural overhaul in years, completing our migration to ECMAScript Modules (ESM) and oclif v4. This modernization brings faster performance, a new semantic color system, and aligns the CLI with modern JavaScript standards.

While v11 introduces breaking changes to legacy namespaces, the benefits are substantial: better performance, improved maintainability, and enhanced usability that simplifies how you manage Heroku resources from the command line.

Modern architecture built for performance and usability

Faster execution via ECMAScript Modules (ESM)

The transition to a full ESM-first architecture is the core of v11. By converting every command, library, and test from CommonJS to ESM, we’ve unlocked significant performance gains:

- Superior tree-shaking: Reduced bundle sizes lead to a leaner installation and faster updates.

- Faster command execution: Optimized module loading streamlines the internal execution path.

- Modern ecosystem alignment: The CLI now mirrors the standards of modern JavaScript, simplifying long-term maintenance.

Streamlined performance with Open CLI Framework (oclif) v4

We’ve jumped two major versions to oclif v4, bringing the CLI in line with the latest standards of the Open CLI Framework. This transition delivers:

- Faster command loading: Improved manifest caching ensures the CLI doesn’t have to parse every command for every interaction, making the experience significantly faster.

- Seamless interoperability: Version 11 ensures smooth compatibility between CommonJS and ESM plugins, allowing for a more flexible developer experience.

- Modular code organization: The upgrade to oclif v4 enables granular imports, leading to a leaner and more maintainable codebase.

To support these changes, we’ve also simplified our build system—migrating from Yarn to npm and removing the monorepo structure in favor of a single, more maintainable package.

Enhanced visual experience

Usability in v11 extends to how you interact with and interpret terminal data. The CLI’s visual output is now more intuitive, customizable, and accessible:

- Consistent semantic colors: Common Heroku resources, along with success, warning, and error messages, now follow a unified color palette, making it easier to identify critical information at a glance.

- Modern theme support: With Version 11, the Heroku CLI is now themeable, allowing for a more modern and readable visual experience. You can also manually opt for a basic ANSI color scheme by setting the `HEROKU_THEME=simple` env var.

- Global accessibility: Version 11 supports disabling colors entirely by setting the `NO_COLOR=true` env var for users with visual impairments or those who prefer plain-text logging.
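As a minimal sketch of the two settings above, the variables can be applied to a single invocation instead of being exported for the whole shell session. `HEROKU_THEME` and `NO_COLOR` are the names given in this post; the wrapper function names are hypothetical and exist only for illustration.

```shell
# Run any command with the basic ANSI theme (HEROKU_THEME=simple, as named in the post).
with_simple_theme() {
  HEROKU_THEME=simple "$@"
}

# Run any command with color disabled (NO_COLOR=true, as named in the post).
without_color() {
  NO_COLOR=true "$@"
}

# Illustrative usage; skipped when the Heroku CLI is not installed.
if command -v heroku >/dev/null 2>&1; then
  without_color heroku --version
fi
```

Scoping the variable to one command keeps interactive sessions colorful while letting scripts and log pipelines stay plain.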

Node.js 22 support

The Heroku CLI v11 ships with Node.js 22 while maintaining Node.js 20 compatibility, providing several key benefits:

- Modern runtime access: Users can now leverage the latest JavaScript features and performance improvements provided by the Node.js 22 runtime.

- Latest standard alignment: The update ensures the CLI remains current with the evolving JavaScript ecosystem.

- Performance-first foundation: Supporting a current LTS version of Node.js is essential for delivering the performance gains of our new ESM-first architecture.

New commands for modern Heroku workflows

We have also focused on the day-to-day developer experience. These updates refine how you interact with your Heroku resources and make it easier to discover the tools you need:

- Unified data maintenance: The `heroku data:maintenance:*` commands are now built into the core CLI. Note that the legacy `heroku pg:maintenance` and `heroku redis:maintenance` commands have been deprecated.

- Fast command discovery: Can’t remember a command name? `heroku search` helps you find it fast.

- Global prompt support: The `--prompt` flag is now available globally and appears in help text for all commands that support it.

- Streamlined REPL invocation: The REPL is now accessible via `heroku repl` and is included in our CLI documentation and help text. Try it out!

Transitioning to Heroku CLI v11

To support this new architecture, v11 includes a few updates to how certain commands and outputs behave. While these represent a shift from legacy versions, they are designed to make your workflow cleaner and more consistent:

- Output formatting: CLI output format has changed in v11, with the most visible changes in table formatting.

- AI plugin: To keep the core installation lean and fast, the `heroku-cli-plugin-ai` is now an optional installation rather than being bundled by default.

- Unified maintenance commands: We’ve simplified data management by moving maintenance tasks into the core `data:maintenance:*` namespace. This replaces the legacy `pg:maintenance` and `redis:maintenance` commands with a single, intuitive workflow.
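Before upgrading, it can help to find scripts that still call the legacy maintenance commands. The following is a rough sketch, not an official migration tool: the function name is hypothetical, and the pattern matches only the two legacy namespaces named above.

```shell
# List every line under a directory (default: current directory) that still
# invokes the legacy pg:maintenance or redis:maintenance namespaces.
# Prints "file:line:text" for each match and nothing when none remain.
find_legacy_maintenance() {
  grep -rnE 'heroku[[:space:]]+(pg|redis):maintenance' "${1:-.}" || true
}
```

For example, `find_legacy_maintenance ./scripts` reports the files and line numbers that need to move to the `data:maintenance:*` namespace.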

A modernized CLI for a modern ecosystem

Heroku CLI v11 is a complete technical modernization designed to grow with the JavaScript ecosystem, and represents a major investment in the CLI’s future. By modernizing our architecture with ESM and oclif v4, we’ve built a faster, more maintainable foundation that will enable us to ship features more quickly while improving the developer experience.

Upgrade today or visit the installation guide. For a full list of updates, check out the CLI changelog. As always, we welcome your feedback as we continue to improve the developer experience.

The post Modernizing the Command Line: Heroku CLI v11 appeared first on Heroku.

Modern applications, especially those leveraging AI and data-heavy libraries, need more room to breathe. To support these evolving stacks and reduce developer friction, we’ve increased the default maximum compressed slug size from 500MB to 1GB.

Understanding app slugs and deployment

App slugs are the container build artifacts produced by Heroku Buildpacks and run in dynos. Allowing larger slugs makes it easier to deploy apps with large library or package dependencies on Heroku. Many AI and machine learning libraries fit this pattern and we’re looking forward to seeing what new types of apps will be possible with the higher limit.

How this can affect your dyno boot times

While the new 1GB limit provides more headroom, slug size directly affects dyno boot times. Larger slugs are slower to download and extract, and large apps take longer to start, which can slow down common tasks like scaling and `heroku run` commands. We still recommend keeping slugs small and nimble to ensure optimal performance.
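One way to act on this recommendation is a simple size check in a deployment script. The sketch below assumes you already have the compressed slug size in bytes (how you obtain it is left out), and it assumes 1GB means 1024^3 bytes, since the post does not give an exact byte value. The function and variable names are hypothetical.

```shell
# Assumption: 1GB = 1024^3 bytes and 500MB = 500 * 1024^2 bytes.
SLUG_LIMIT_BYTES=$((1024 * 1024 * 1024))   # new default maximum from the post
SLUG_WARN_BYTES=$((500 * 1024 * 1024))     # previous 500MB default, used as a warning line

# Classify a compressed slug size given in bytes.
check_slug_size() {
  size=$1
  if [ "$size" -gt "$SLUG_LIMIT_BYTES" ]; then
    echo "over-limit"
  elif [ "$size" -gt "$SLUG_WARN_BYTES" ]; then
    echo "large"
  else
    echo "ok"
  fi
}
```

Treating the old 500MB limit as a warning threshold keeps boot-time regressions visible even though the deploy itself would now succeed.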

Increased build compile timeouts for complex apps

We’re also increasing the build compile timeouts as part of this change. Build timeout is another limitation commonly hit for complex Heroku apps with many dependencies. Heroku already has a lot of flexibility to allow occasional long-running builds when build caches are cleared, and today’s update increases these timeout limits across the board.

Removing deployment friction for developers

Slug size limits and build timeouts weren’t just minor inconveniences; they were recurring points of friction that disrupted developer flow. By easing these constraints, we’re ensuring that your deployment pipeline stays out of your way, allowing you to focus on building complex applications.

The post Bigger Slugs and Greater Build Timeout Flexibility appeared first on Heroku.

Most developers never see the 11 pack releases we shipped in the last 14 months as pack CLI maintainers. That’s actually a good sign: it means the infrastructure just works. When a critical vulnerability emerges requiring an immediate upgrade, the fix is shipped within days.

Here’s what most developers don’t see: that same security patch now protects every buildpack user across Heroku, Google Cloud, OpenShift, VMware Tanzu, and thousands of internal platforms.

The daily work of platform maintenance

As maintainers of pack CLI, the entry point to Cloud Native Buildpacks (CNBs), our work lives in that invisible layer between developers and infrastructure. The routine looks like this: daily Slack monitoring, triaging GitHub issues, reviewing community pull requests, and participating in weekly Cloud Native Computing Foundation (CNCF) working group meetings.

In the last 14 months, we’ve shipped 27 releases across CNB projects (11 for pack, 16 for buildpacks/github-actions), reviewed 65+ community PRs, and implemented two major features: Execution Environments and System Buildpacks. For pack alone, that’s roughly one release every 5-6 weeks, each bringing bug fixes, security patches, or new capabilities.

The work includes unglamorous but critical tasks: migrating Windows CI from Equinix LCOW to GitHub-hosted runners, upgrading from docker/docker to moby/moby client, and shipping FreeBSD binaries for broader platform support. When multi-arch builds needed the --append-image-name-suffix flag or when the Platform API 0.14 lifecycle restorer had issues, those fixes went out to everyone.

There are no flashy demos; instead this is invisible infrastructure work that proves its worth when an emergency security patch ships or a build completes in 30 seconds instead of failing after 5 minutes.

How upstream open source creates downstream value

Here’s where it gets interesting: Heroku has funded our maintainer work, but the benefits ripple across the entire cloud-native ecosystem. This bidirectional value is what makes open source infrastructure so powerful.

Take System Buildpacks, a feature we recently implemented:

- For Heroku, it means they can curate platform defaults while giving users flexibility with third-party buildpacks.

- For Google Cloud Run, it solves its buildpack composition problem.

- For internal platform teams, it eliminates the manual Procfile buildpack overhead that’s been a pain point for years.

That one newly implemented feature created universal benefit.

Or consider Execution Environments, another major feature we shipped this year. This enables Heroku to support test environments for CI products while also helping any platform operator who needs consistent build configurations across development, CI, and production. The code lives upstream in the CNCF project, battle-tested by multiple companies, and maintained collaboratively.

The CNCF governance model ensures no single company can control the direction. Companies like Heroku, Google, and VMware collaborate on infrastructure so they can compete on developer experience. When we fix a multi-arch publishing bug or add FreeBSD support, everyone benefits immediately.

Real innovation happens in production

The features we’re shipping aren’t theoretical; they solve real problems in production:

- Build Observability (RFC 0131): Platform operators need metrics and tracing to optimize costs and troubleshoot failures. Coming in Q3 2026, this will provide comprehensive visibility into build performance across the ecosystem.

- OCI Image Annotations (RFC 0130): Enterprise compliance requires metadata tracking. We’re making it zero-config so platforms can meet SOC 2, FedRAMP, and other regulatory requirements without manual overhead.

- Removing Kaniko dependency: Supply chain security isn’t just a buzzword; it means actively removing unmaintained dependencies from critical infrastructure to reduce risk.

Each feature starts with a production requirement, gets refined through community discussion, and ships as a standard that everyone can rely on.

Why this model works

At KubeCon EU 2025, the biggest question during our presentation “Buildpacks: Pragmatic Solutions to Quick and Secure Image Builds” wasn’t about technical implementation—it was about sustainability. How do we keep critical open source infrastructure maintained?

Companies like Heroku invest in dedicated maintainer teams, and get battle-tested technology that serves their needs while contributing to the commons. The ecosystem gets features driven by real production requirements, not academic speculation, and developers get reliable infrastructure that just works.

That’s the true impact of open source maintainership: 65+ PRs reviewed, 27 releases shipped, 2 major features delivered, and the countless invisible fixes that keep platforms running smoothly.

Join the conversation at KubeCon EU 2026

The evolution of Cloud Native Buildpacks continues, and there is no better place to see where we’re headed next than at KubeCon EU 2026.

If you want to dive deeper into the future of the project, don’t miss the upcoming session:

Buildpacks: Towards 1.0, AI, and Other Things

Thursday March 26, 2026 14:30 – 15:00 CET

Aidan Delaney, Bloomberg

This talk covers the road to 1.0, focusing on the stability and technical components (Lifecycle, Platform, and Builder) necessary for a production-ready foundation. Aidan will also showcase how the ecosystem is integrating AI and Machine Learning to simplify the deployment of AI-driven applications.

Visit the Buildpacks Project booth

The community will be out in full force! Stop by the CNB booth, P-3B in the Project Pavilion (map), to meet the maintainers, ask questions, and learn more about the “invisible” infrastructure powering your code. We’d love to see you there!

The post Behind the Scenes: How Maintaining Cloud Native Buildpacks Powers Platforms Like Heroku appeared first on Heroku.

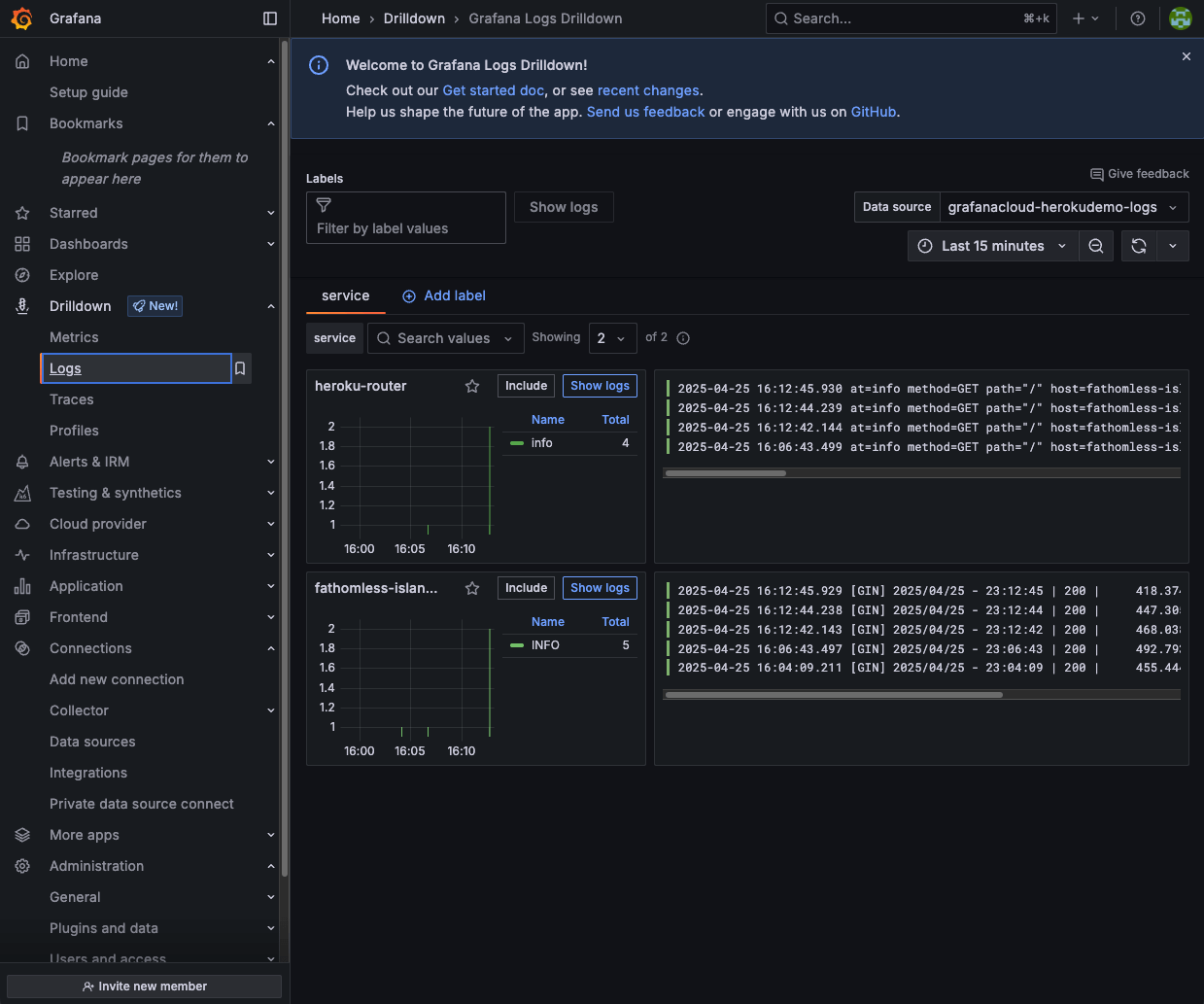

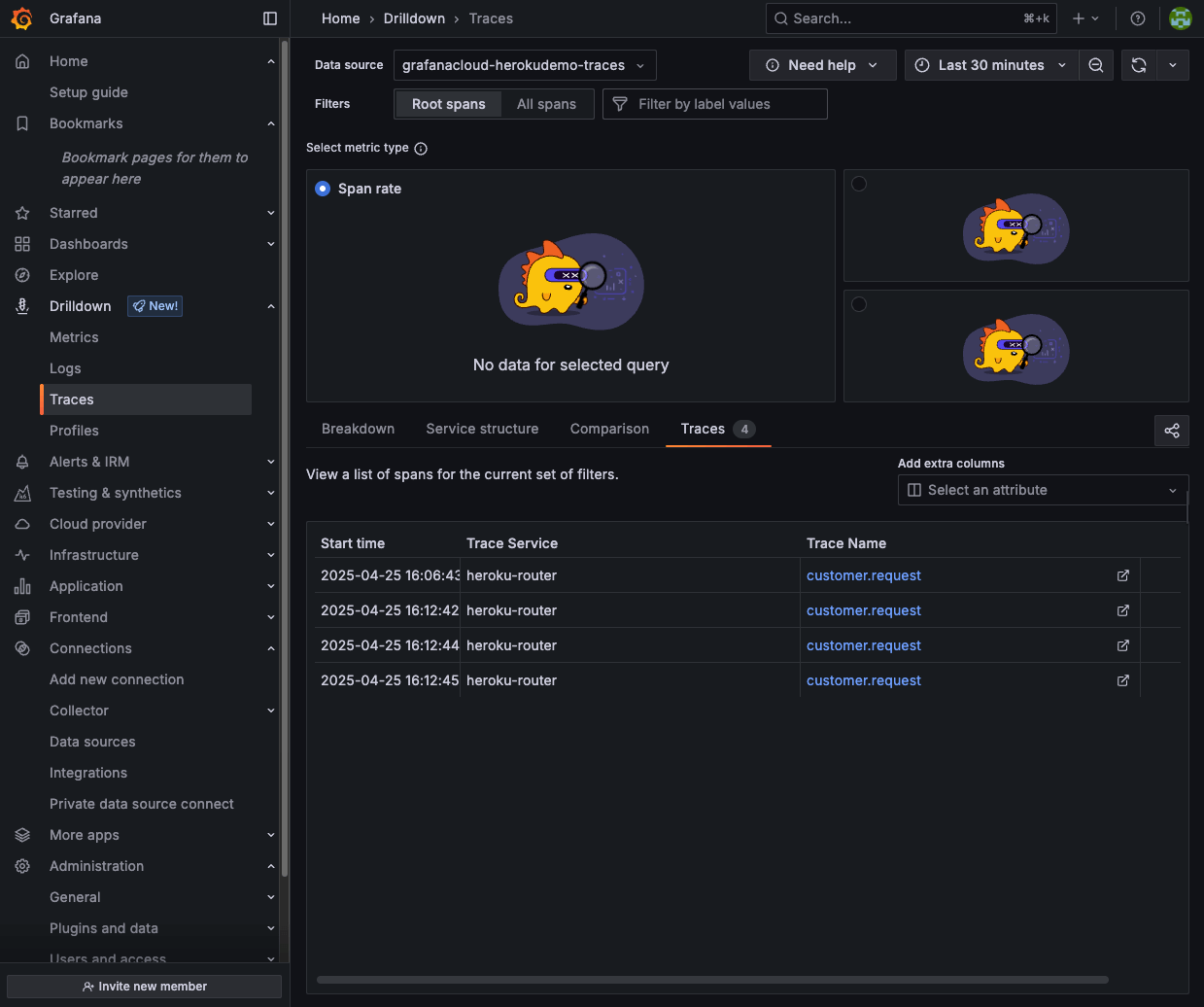

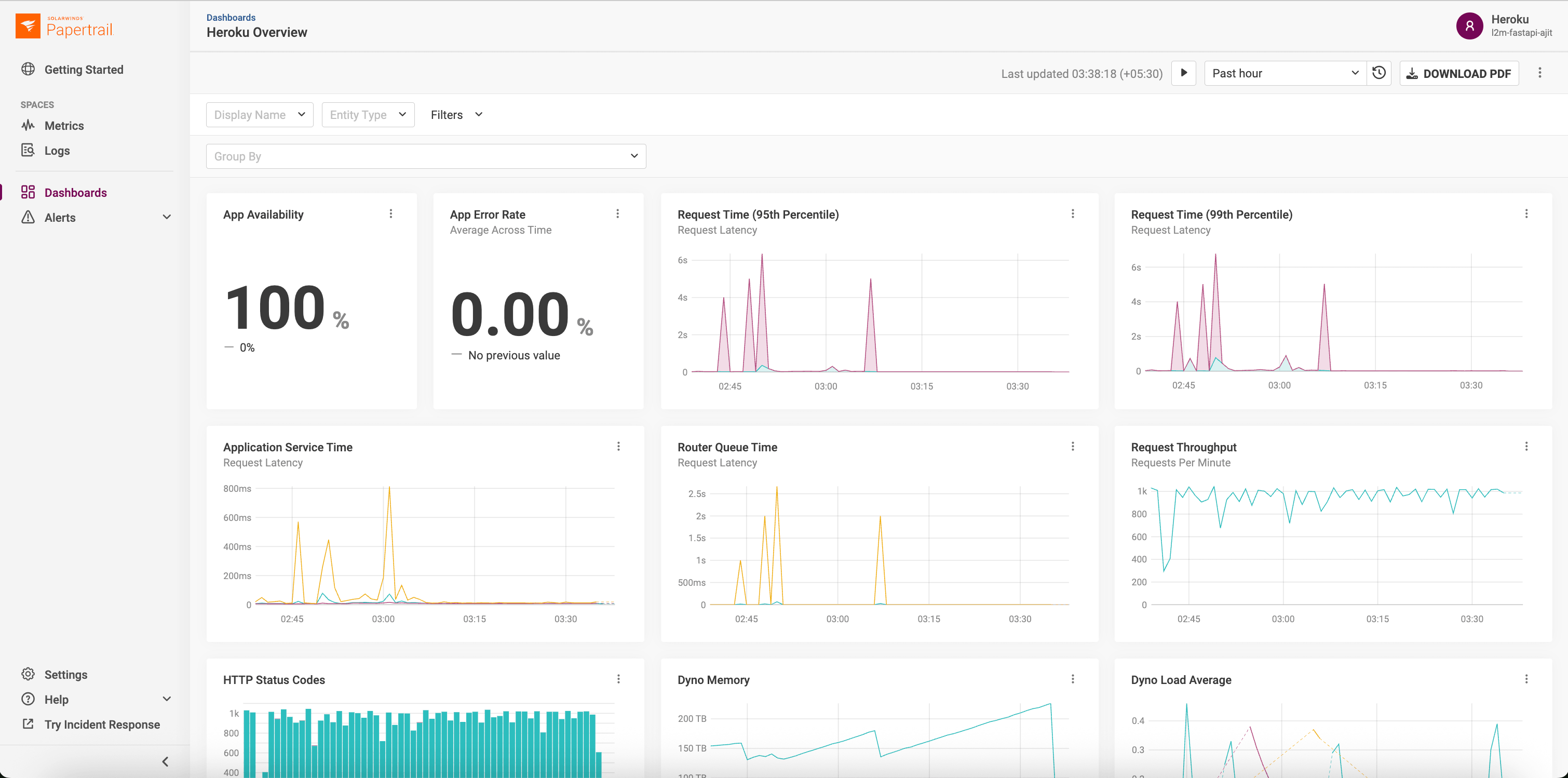

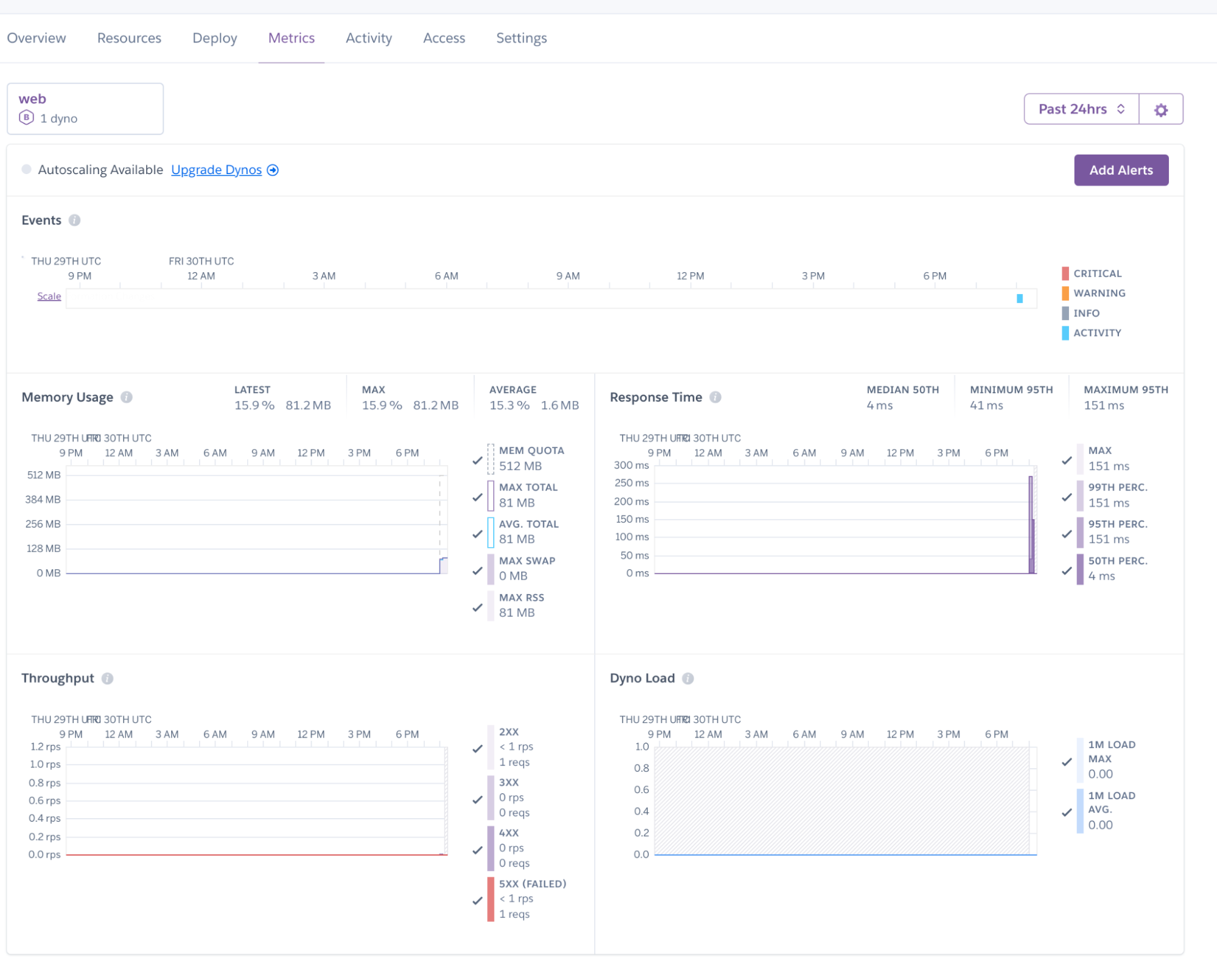

Modern applications on Heroku don’t just consist of code. They are living ecosystems made up of dynos, databases, third-party APIs, and complex user interactions. As these systems scale, so do the logs and metrics. To efficiently extract the signal from the noise, you need to understand system health in the context of external factors, like resource limits. While Heroku removes the pain of managing servers, observability is critical for monitoring service interactions and optimizing performance.

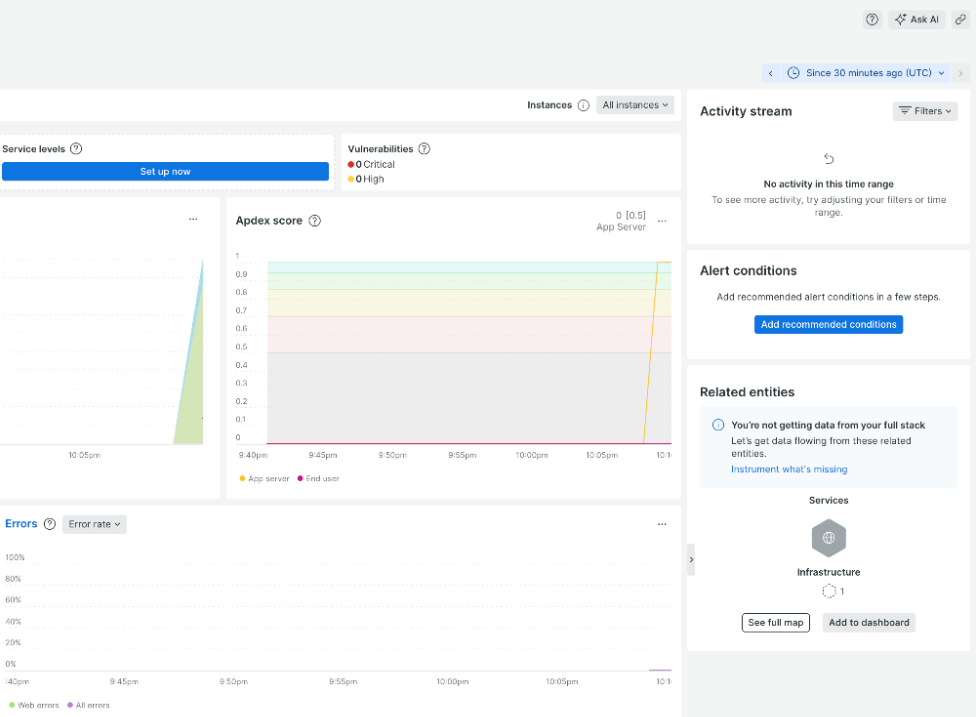

Maintaining peak performance and operational health demands sophisticated logging and monitoring capabilities. However, a common friction point remains: the “swivel-chair” workflow. The necessity of frequent toggling between application source code, deployment activity logs, and disparate monitoring dashboards creates significant cognitive load. When you are diagnosing a critical production error, every second spent correlating a timestamp from a log file to a spike on a separate metrics dashboard is a second lost.

To resolve this fragmentation, SolarWinds and Heroku have expanded the SolarWinds Papertrail add-on. By delivering logs and metrics in a single, unified solution, we are helping developers streamline troubleshooting and dedicate more time to writing high-quality code.

The high cost of context switching: Where traditional monitoring fails

Before we dive into the solution, it is worth dissecting the problem. In a traditional Heroku setup, a developer might rely on `heroku logs --tail` for real-time events, a separate add-on for performance graphs, and perhaps a third tool for uptime alerting.

This fragmentation results in several operational inefficiencies:

- Correlation blindness: If your application throws a 500 error, was it caused by a memory leak, a database lock, or a bad deployment? Finding the answer requires manually matching the timestamp of the error log with the timestamp of the CPU spike on a different screen.

- Alert fatigue: When metrics and logs are siloed, alerts lack context. A “High CPU” alert is useless without the accompanying log lines that tell you what process was consuming that CPU.

- Tool sprawl: Managing multiple subscriptions, API keys, and dashboards increases administrative overhead and costs.

The cognitive load required to diagnose a production error is often higher than the complexity of the fix itself. This is where the unified SolarWinds Papertrail add-on changes the game.

The solution: One interface for logs and metrics

To simplify and consolidate your application’s observability stack, SolarWinds Papertrail has been extended to replace the functionality previously delivered by separate add-ons. The enhanced SolarWinds Papertrail add-on combines real-time log management, metrics dashboards, and alerting into a single, unified offering.

This consolidation provides a single pane of glass for all aspects of your application’s health. By bringing these capabilities under one roof, we eliminate the high cost of context switching.

Core components of the unified architecture

SolarWinds Papertrail’s unified architecture isn’t just about moving data; it’s about transforming raw logs and metrics into actionable insights. By layering different types of operational data, we tell a complete story and eliminate the traditional barriers to speed.

Eliminate data lag with real-time unified ingestion

SolarWinds Papertrail’s signature feature has always been frustration-free log management. It allows you to tail and search logs as they happen. Real-time means real-time. There is no waiting for batches to process or indexes to update.

- Live tail: Watch events stream live, identical to the CLI experience but with powerful filtering and highlighting.

- Contextual search: Access your logs via a web browser, command-line interface (CLI), or API with the same effortless search format.

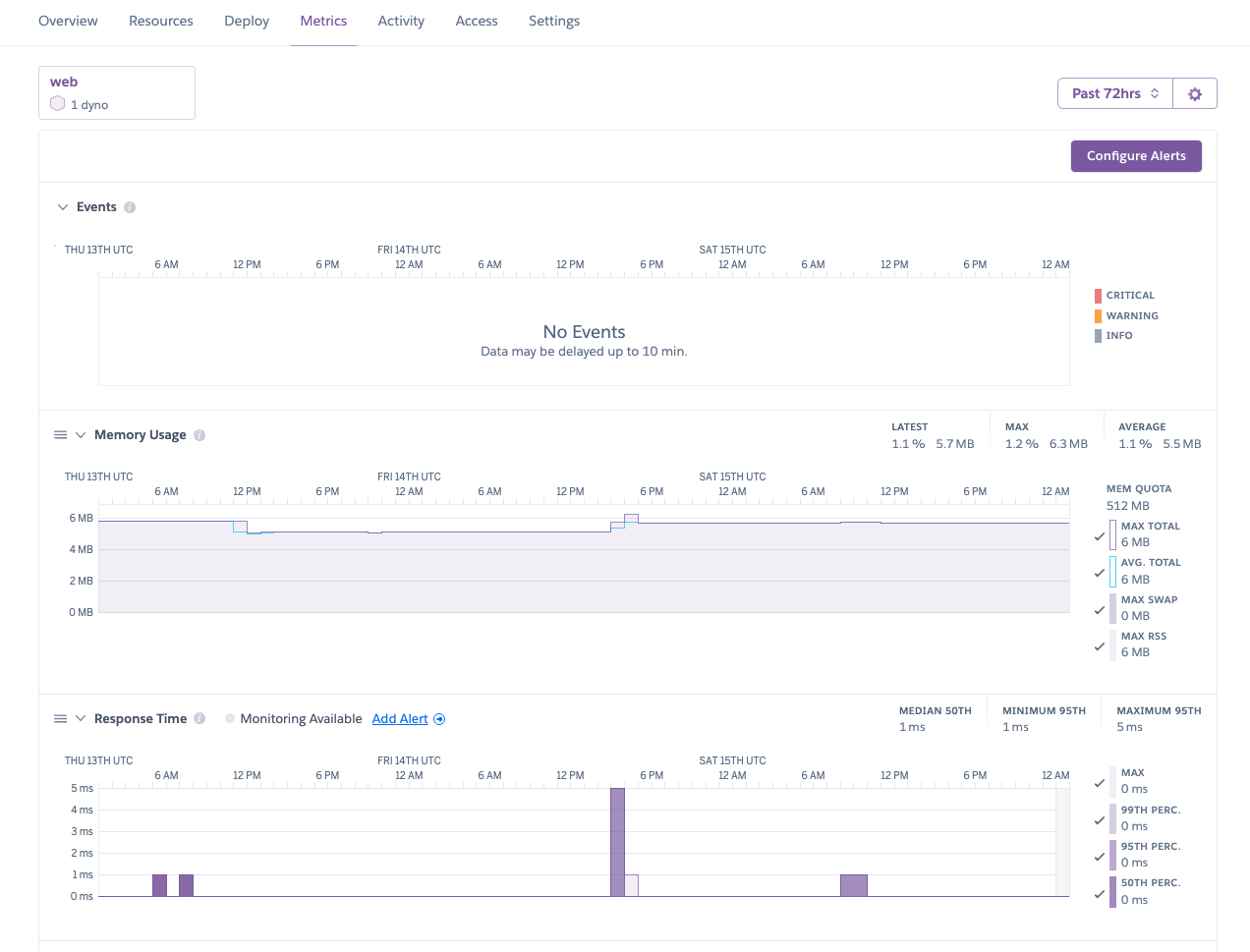

Gain instant visibility through native Heroku metrics

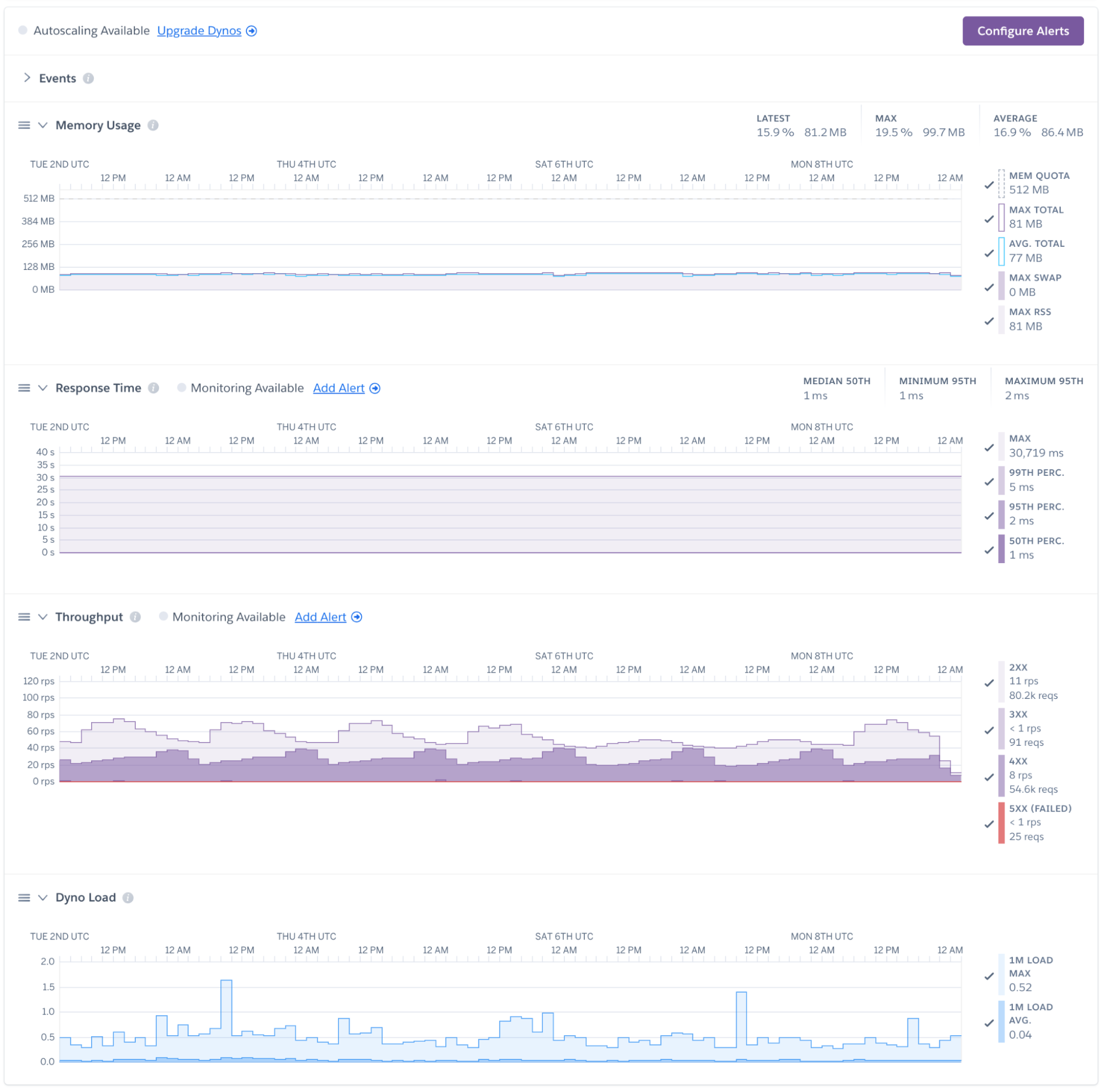

Instead of requiring a separate agent or complex configuration, SolarWinds Papertrail leverages Heroku’s native capabilities. It automatically ingests high-volume log data and structures it into actionable metrics.

- Dyno performance: Visualize CPU load, memory usage, and load averages per dyno.

- Application health: Track throughput, response times, and error rates.

- Data services: Monitor critical database performance including active and waiting connections, database size, cache hit rates, and IOPS to prevent connection saturation and storage bottlenecks.

When you view these metrics alongside your logs, the shape of your traffic becomes visible. A sudden dip in log volume might indicate a silent failure where requests aren’t reaching the server, while a massive spike could signal a DDoS attack or a runaway loop.

Cut through the noise with context-aware alerting

Alert fatigue is a real threat to operational excellence. If everything is an emergency, nothing is. The expanded SolarWinds Papertrail toolset moves beyond basic error counting to intelligent alerting.

You can now set granular, custom thresholds using minimum, maximum, average, or summary values on any metric. This allows you to filter out transient noise and focus on statistically significant deviations.

When a true issue is detected, the system integrates seamlessly with the tools you already use, pushing actionable notifications to Slack, PagerDuty, Microsoft Teams, and more.

Scale your team’s expertise with institutional memory

One of the most underrated challenges in growing development teams is the loss of troubleshooting context during handoffs or scaling. SolarWinds Papertrail addresses this by treating every saved search and custom alert as institutional memory.

Because all Heroku collaborators can contribute to a shared library of diagnostic tools, the platform accumulates your team’s collective expertise. A complex search query written to diagnose a specific race condition today doesn’t vanish into a terminal history; it becomes a reusable diagnostic tool for a junior developer tomorrow.

The 2:00 AM test: From fragmented logs to near-instant resolution

To understand the practical impact, let’s look at a common scenario. The incident: It is 2:00 AM. Your PagerDuty triggers an alert: “API Response Time High.”

The old way

You wake up, log into your metrics dashboard, and see a spike in response time starting at 1:55 AM. You then open your logging provider and try to search for logs from that timeframe. You are scrolling, trying to mentally overlay the graphs with the text. You see some database errors but aren’t sure if they are the cause or the symptom.

The SolarWinds Papertrail way

You click the link in the PagerDuty alert. It takes you directly to the SolarWinds Papertrail dashboard, focused on the 1:55 AM timeframe.

- Top view: You see the “Response Time” graph spiking.

- Bottom view: Directly underneath, you see the log stream for that exact moment.

- Diagnosis: You notice a specific background worker (`Dyno worker.1`) outputting “Memory quota exceeded” errors (R14) right as the latency spiked.

- Resolution: You identify that a specific batch job was consuming too much RAM. You restart the dyno and push a fix to optimize the job.

Total time? Minutes, not hours. The correlation was instant because the data was unified.
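For comparison, the same diagnosis can be approximated from raw log output on the command line. This is only a sketch of the manual approach, not part of the add-on: the function name is hypothetical, and it assumes platform error lines have the form `heroku[<dyno>]: Error R14 ...`.

```shell
# Read Heroku log lines on stdin and print "<count> <dyno>" for each dyno
# that reported an R14 error. Assumes lines contain "heroku[<dyno>]: Error R14".
count_r14() {
  grep 'Error R14' \
    | sed -n 's/.*heroku\[\([^]]*\)\].*/\1/p' \
    | sort | uniq -c | awk '{print $1, $2}'
}
```

You could feed it recent logs with `heroku logs --num 500 -a your-app-name | count_r14`. Note that this yields counts only; correlating them with a latency graph is still a manual step.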

Migration: Simplifying the stack

Two other SolarWinds add-ons, Librato and AppOptics, were deprecated at the end of January 2026. For teams that previously relied on them, the path forward is now significantly streamlined: managing distinct subscriptions and dashboards for logs versus metrics is a relic of the past now that we’ve brought metrics into SolarWinds Papertrail.

Accelerate development and minimize headaches with SolarWinds Papertrail

Modern development isn’t just about shipping code. It’s about owning the lifecycle of that code in production. The SolarWinds Papertrail add-on for Heroku offers a path away from fragmented, frustration-filled troubleshooting toward a streamlined, full-stack view of your application’s health.

By consolidating logs, metrics, and alerting into a single, frustration-free interface, you regain the focus required to build what’s next.

Ready to streamline your workflow? Find SolarWinds Papertrail in the Heroku Elements Marketplace today.

The post From Fragmented Logs to Full-Stack Visibility with SolarWinds Papertrail appeared first on Heroku.

The web browser and certificate authority industry is shortening the maximum allowed lifetime of TLS certificates. These changes will improve security on the Web, but you may have to change certificate maintenance practices for apps you run on Heroku.

The good news is that if you’re using Heroku Automated Certificate Management, no changes are required: Heroku already refreshes and updates certificates on your apps according to the new policies.

If you maintain and upload certificates for your Heroku applications yourself, here is what the changes will mean for you.

Industry shift towards shorter certificate lifetimes

The CA/Browser Forum is phasing in shorter maximum lifetimes for all publicly trusted SSL/TLS certificates. While the final goal is a 47-day limit by 2029, the first major milestone is approaching quickly.

Starting March 15, 2026, the maximum validity period for publicly trusted SSL/TLS certificates will be reduced to 200 days.

| Effective Date | Maximum Certificate Lifespan |
|---|---|
| Current | 398 days |
| March 15, 2026 | 200 days |
| March 15, 2027 | 100 days |
| March 15, 2029 | 47 days |
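The schedule above can be expressed as a small lookup, which is handy when a renewal script needs to know the maximum lifetime it may request. This is a sketch using only the dates and limits from the table; the function name is hypothetical.

```shell
# Print the maximum certificate lifetime in days for an issuance date
# given as YYYY-MM-DD, following the schedule in the table above.
max_cert_days() {
  issued=$(printf '%s' "$1" | tr -d '-')   # 2026-03-15 -> 20260315
  if   [ "$issued" -ge 20290315 ]; then echo 47
  elif [ "$issued" -ge 20270315 ]; then echo 100
  elif [ "$issued" -ge 20260315 ]; then echo 200
  else echo 398
  fi
}
```

For example, `max_cert_days 2026-06-01` prints `200`.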

Why this is happening

Shorter certificate lifespans improve security in three ways:

- Reduced exposure: Shrinks the window of exposure if a private key is compromised

- Modern standards: Ensures certificates rotate frequently to adopt the latest cryptographic standards

- Automation: Encourages a shift toward automated certificate management

Recommended actions for manual certificate users

If you use custom SSL certificates on Heroku (certificates you obtain and upload yourself), you will need to:

- Plan for more frequent renewals: After March 15, 2026, you’ll need to renew certificates at least every 200 days (approximately every 6.5 months) rather than annually.

- Update your renewal processes: Ensure your team or certificate management tools can handle the increased renewal frequency.

- Check your current certificates: Review the expiration dates of your existing certificates.

Note: Certificates issued before March 15, 2026 with longer validity periods will remain valid until they expire, but renewals after that date must comply with the new 200-day maximum.
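To review expiration dates for certificates you manage yourself, a check like the following could run on a schedule. It is a minimal sketch that assumes `openssl` is installed and that the certificate is a local PEM file; the function name is hypothetical.

```shell
# Exit 0 when the certificate in $2 expires within $1 days, 1 when it does not,
# and 2 when the file cannot be read. A file that openssl cannot parse is
# also reported as expiring, so it gets attention rather than being ignored.
cert_expires_within() {
  days=$1
  cert=$2
  [ -r "$cert" ] || { echo "cannot read $cert" >&2; return 2; }
  # `openssl x509 -checkend N` exits 0 if the certificate is still valid N seconds from now.
  if openssl x509 -checkend "$((days * 86400))" -noout -in "$cert" >/dev/null 2>&1; then
    return 1
  fi
  return 0
}
```

A renewal job might call `cert_expires_within 30 cert.pem && echo "renew soon"`, where the 30-day window is an arbitrary choice and `cert.pem` is a placeholder path.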

Automating certificate renewals with Heroku ACM

Consider switching to Heroku Automated Certificate Management (ACM). ACM automatically provisions and renews certificates for your custom domains at no additional cost, eliminating the need for manual certificate management.

To enable ACM for your app:

heroku certs:auto:enable -a your-app-name

Learn more: Heroku ACM Documentation

Have questions about certificate management?

If you have questions about these changes or need assistance with your certificate strategy, please contact Heroku Support or visit our documentation.

We’re committed to helping you navigate these industry changes smoothly.

The post Preparing for Shorter SSL/TLS Certificate Lifetimes appeared first on Heroku.

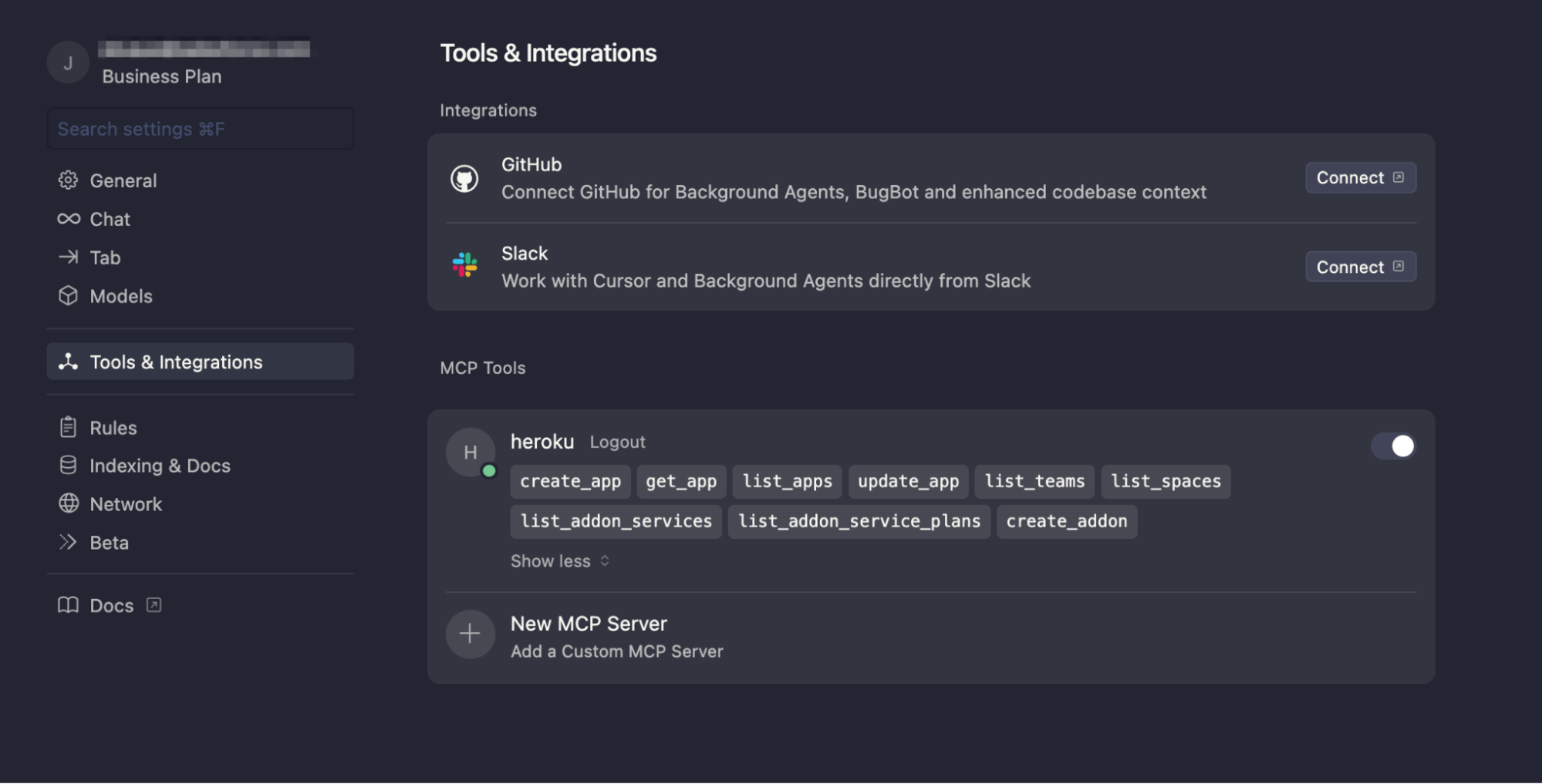

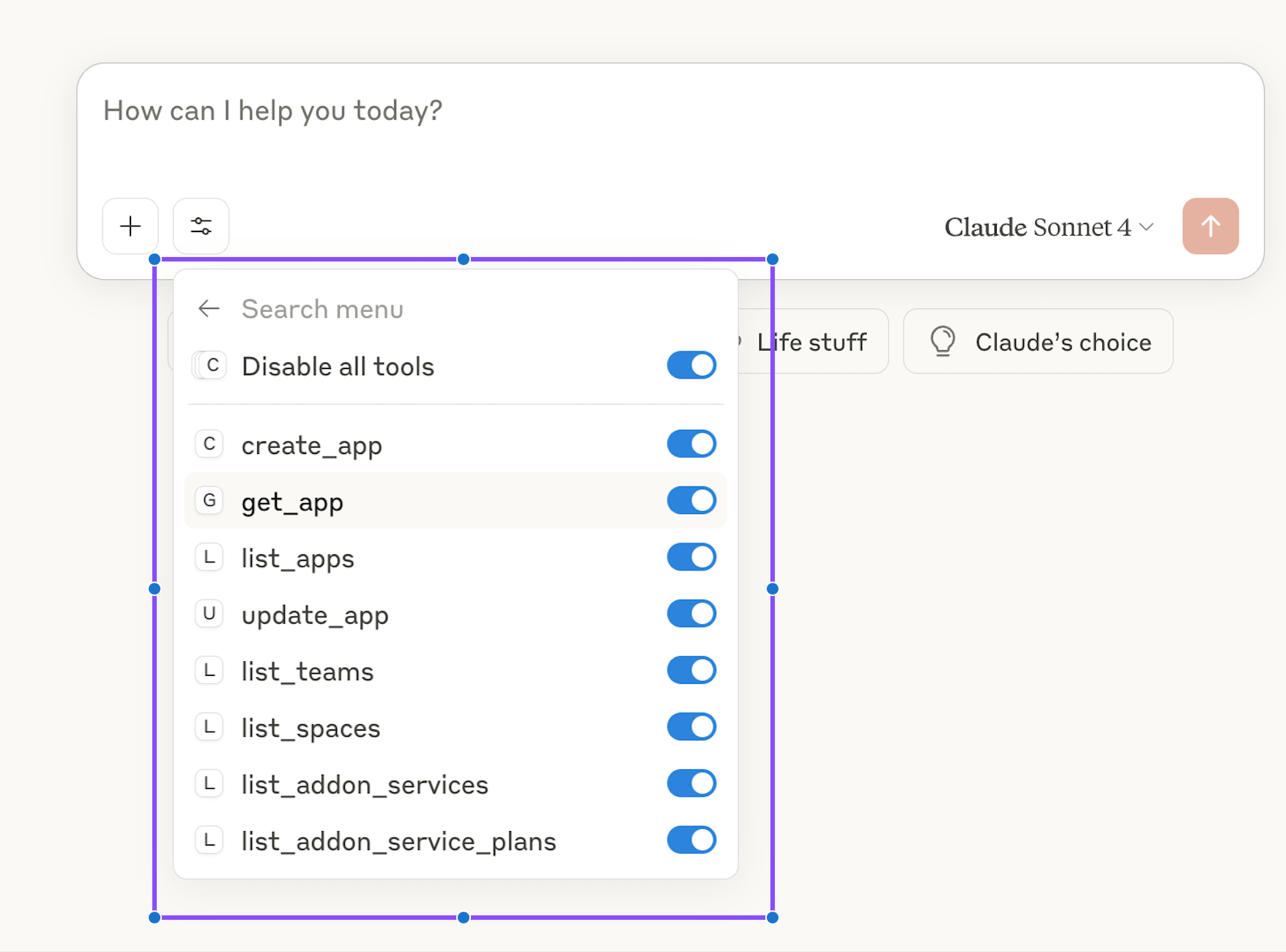

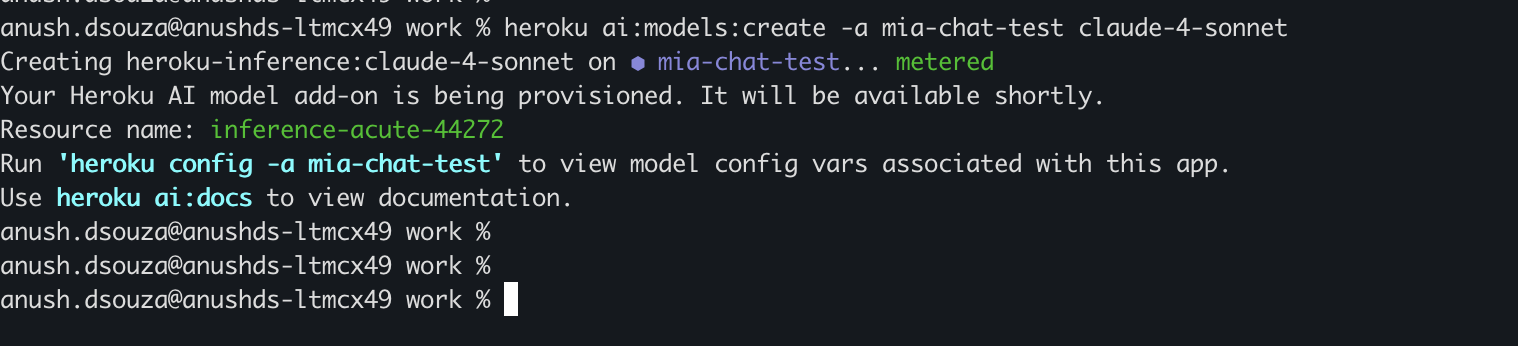

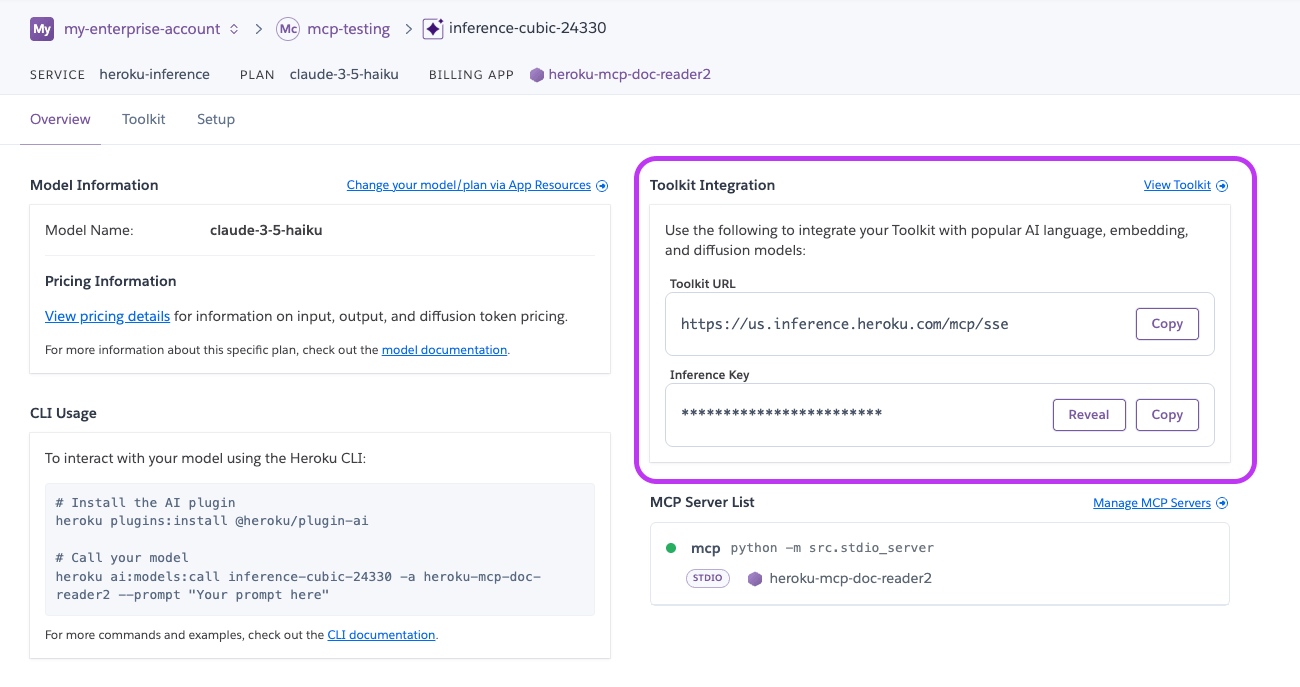

Heroku is introducing significant updates to Managed Inference and Agents. These changes focus on reducing developer friction, expanding the model catalog, and streamlining deployment workflows.

More flexibility with the new standard plan

Until now, Heroku’s model-based plans required developers to provision a specific add-on for a specific model. This created significant operational overhead. If you wanted to experiment with a different model or implement a fallback strategy, you had to provision a new add-on and manage multiple config variables.

We have added a new standard plan for Heroku Managed Inference and Agents.

With this update, a single add-on and a single API key grant access to our entire catalog of supported models. You no longer need to reprovision resources to switch from a smaller model to a high-reasoning model. Instead, you simply update the model name in your code. This unified approach improves developer experience and allows for more robust application architectures. Try the standard plan using the following CLI command:

$ heroku addons:create heroku-inference:standard -a $APPNAME
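To illustrate what "update the model name in your code" can look like, here is a hedged sketch in which the model is just a function argument. The `INFERENCE_URL` and `INFERENCE_KEY` variable names and the `/v1/chat/completions` path are assumptions not stated in this post, so check the add-on documentation for the actual values. The function names are hypothetical, and model IDs must come from the supported catalog.

```shell
# Build a minimal chat request body. $1 = model ID, $2 = prompt.
# No JSON escaping is done, so keep the prompt free of quotes and backslashes.
chat_payload() {
  printf '{"model":"%s","messages":[{"role":"user","content":"%s"}]}' "$1" "$2"
}

# Send a prompt to a given model through one add-on and one key.
# Assumed names: INFERENCE_URL, INFERENCE_KEY, and the /v1/chat/completions path.
ask_model() {
  curl -sS "$INFERENCE_URL/v1/chat/completions" \
    -H "Authorization: Bearer $INFERENCE_KEY" \
    -H "Content-Type: application/json" \
    -d "$(chat_payload "$1" "$2")"
}
```

With this shape, a fallback strategy is just a second call to `ask_model` with a different model ID; no additional add-on or config variable is involved.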

New frontier models and an expanded open-weight catalog

Claude 4.6 models

Heroku now supports the Claude 4.6 family, the most capable models in the Claude family, designed for high-complexity workloads.

- Claude Opus 4.6: Designed for advanced software development, complex agentic workflows, and long-horizon planning.

- Claude Sonnet 4.6: High-performing model that is ideal as a daily driver and for sophisticated financial analysis.

Open-weight models

We have also expanded our catalog with five new open-weight models to provide more cost-effective options for diverse use cases.

- DeepSeek v3.2: Advanced model built for high-efficiency agentic reasoning and long-context understanding.

- Kimi K2.5: Optimized for massive context processing, advanced mathematical reasoning, and complex agent swarms.

- MiniMax M2.1: Specialized for practical engineering and multi-language full-stack application building.

- ZAI GLM 4.7: Industry-leading model for reliable tool-calling and for vibe coding visually polished front ends.

- ZAI GLM 4.7 Flash: A lightweight model optimized for speed, cost-efficiency, and agentic workflows where responsiveness is critical.

Embed models

We are enhancing our support for vector-based search and retrieval with a new Cohere Embed V4 model. The latest generation of Cohere’s embedding technology is built for higher accuracy and complex document analysis.

- Cohere Embed V4: Specifically designed to understand conceptual relationships rather than just keyword matching.

Model deprecation notice

As we transition to these next-generation models, we are beginning the deprecation process for older versions, including Claude 3.5, Claude 3.7, and Claude 4. Users are encouraged to migrate to Claude 4.5 and 4.6 to ensure continued support and optimal performance.

Build better with Heroku AI

The shift to a standard plan and the addition of new frontier models like Claude Opus 4.6 represent Heroku’s commitment to providing access to a wide model catalog. By improving developer experience and expanding model choice, we are making it easier than ever to build, scale, and optimize AI-powered applications.

To get started, visit the Heroku Dev Center or provision the new standard plan for Heroku Managed Inference and Agents today.

The post Whats New in Heroku AI: New Models and a Flexible Standard Plan appeared first on Heroku.

The post Code Execution Sandbox for Agents on Heroku appeared first on Heroku.

Large language models are good at writing code. Data from Anthropic shows that allowing Claude to execute scripts, rather than relying on sequential tool calls, reduces token consumption by an average of 37%, with some use cases seeing reductions as high as 98%.

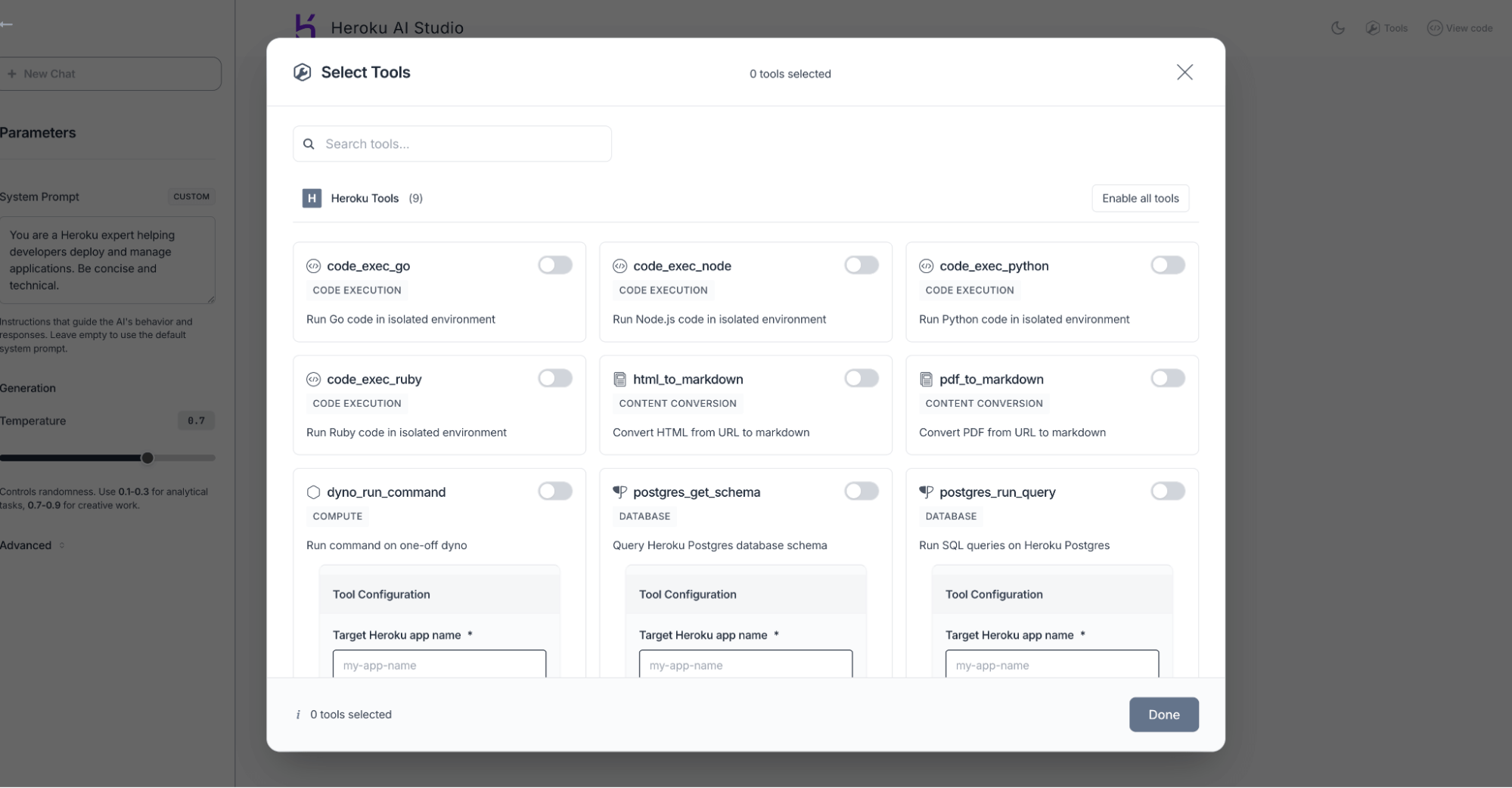

Untrusted code needs a secure and isolated place to execute. We solved this with code execution sandboxes (powered by one-off dynos), launched alongside Heroku Managed Inference and Agents in May 2025.

You can leverage these sandboxes in two ways:

- Built-in tools, within our Managed Inference and Agents API

- MCP tool, by deploying our open-source Model Context Protocol (MCP) servers to connect the sandbox to any client, including Agentforce, Claude Desktop, or Cursor

How agents improve with code execution tools

With sequential tool calls, every tool definition and intermediate output is forced through the model’s context window. This is highly inefficient. For example, if you analyze a 10MB log file, the entire file consumes your context even if you only need a brief summary of the errors.

The better pattern, which Anthropic calls programmatic tool calling, lets the model write code that orchestrates everything.

If you’re using Salesforce and want to ask Agentforce to find at-risk deals in your Q1 pipeline, the agent writes a script that queries thousands of opportunities, cross-references activity history, filters for deals with no recent engagement, and returns just the 12 that need attention. The tool execution, reasoning, and analysis happen in the Heroku sandbox, and only the summary hits the model’s context.
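To make the log-file case concrete, here is a rough sketch of the kind of script a model might write to run inside the sandbox. The log format and the `summarize_errors` helper are hypothetical. The point is only that the raw lines stay in the sandbox while a few dozen characters of summary go back to the model.

```python
from collections import Counter


def summarize_errors(log_lines, top_n=3):
    """Count error types in raw log lines and keep only the most common ones."""
    counts = Counter()
    for line in log_lines:
        # Hypothetical log format: "<timestamp> <LEVEL> <ErrorType>: <message>"
        parts = line.split(" ", 3)
        if len(parts) >= 3 and parts[1] == "ERROR":
            counts[parts[2].rstrip(":")] += 1
    return counts.most_common(top_n)


# Stand-in for a large log file that lives in the sandbox, not in the prompt.
raw_log = (
    ["2026-03-01T10:00:00Z ERROR TimeoutException: upstream took too long"] * 5000
    + ["2026-03-01T10:00:01Z INFO request completed"] * 20000
    + ["2026-03-01T10:00:02Z ERROR ConnectionRefused: redis unavailable"] * 1200
)

summary = summarize_errors(raw_log)
raw_chars = sum(len(line) for line in raw_log)
summary_chars = len(str(summary))
print(summary)
print(f"raw log: {raw_chars} chars, summary: {summary_chars} chars")
```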

Isolation via one-off dynos

To execute untrusted code safely, we use one-off dynos. This is the same infrastructure that has been used for administrative or maintenance tasks on Heroku for over a decade. Because these dynos are spun up on demand and terminate after use, they provide a naturally isolated, cost-effective, and secure environment. The blast radius of LLM-generated code is limited to an ephemeral container.

How to use the built-in tools

If you’re using the Managed Inference and Agents API, include code_exec_python (or code_exec_ruby, code_exec_node, code_exec_go) in your tool list:

    curl "$INFERENCE_URL/v1/agents/heroku" \
      -H "Content-Type: application/json" \
      -H "Authorization: Bearer $INFERENCE_KEY" \
      -d '{
        "model": "claude-4-sonnet",
        "messages": [
          {
            "role": "user",
            "content": "Calculate the standard deviation of [23, 45, 67, 12, 89, 34, 56, 78, 90, 11]"
          }
        ],
        "tools": [
          {
            "type": "heroku_tool",
            "name": "code_exec_python"
          }
        ]
      }'

The agent writes Python, we execute it in a dyno, and stream back the result:

    {
      "choices": [
        {
          "message": {
            "role": "assistant",
            "content": "The standard deviation is 30.19. Here's what I calculated:\n\nMean: 50.5\nVariance: 911.39\nStd Dev: 30.19\n\nThe data has fairly high spread - values range from 11 to 90."
          }
        }
      ]
    }
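For reference, the script the agent runs in the dyno could look something like the sketch below. This is not the agent's actual output, only a plausible equivalent. The figures in the response match the sample statistics (dividing by n − 1). The population standard deviation of the same data would be about 28.64.

```python
import statistics

data = [23, 45, 67, 12, 89, 34, 56, 78, 90, 11]

mean = statistics.mean(data)
variance = statistics.variance(data)  # sample variance (divides by n - 1)
std_dev = statistics.stdev(data)      # sample standard deviation

print(f"Mean: {mean}")
print(f"Variance: {variance:.2f}")
print(f"Std Dev: {std_dev:.2f}")
```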

You can pass runtime_params with max_calls to limit how many times the tool runs during a single agent loop.
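A minimal sketch of a request body with that limit applied. It assumes `runtime_params` is nested inside the tool entry, which you should confirm against the API reference. The `build_agent_request` helper is hypothetical.

```python
import json


def build_agent_request(prompt: str, max_calls: int) -> dict:
    """Build an agents request body that caps code execution tool runs."""
    return {
        "model": "claude-4-sonnet",
        "messages": [{"role": "user", "content": prompt}],
        "tools": [
            {
                "type": "heroku_tool",
                "name": "code_exec_python",
                # Assumption: runtime_params is nested inside the tool entry.
                "runtime_params": {"max_calls": max_calls},
            }
        ],
    }


body = build_agent_request("Find the median of [3, 1, 2]", max_calls=3)
print(json.dumps(body, indent=2))
```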

Deploying your own code execution MCP server

For Agentforce, Claude Desktop, Cursor, or custom frameworks, deploy the MCP server directly:

    git clone https://github.com/heroku/mcp-code-exec-python
    cd mcp-code-exec-python
    heroku create my-sandbox
    heroku config:set API_KEY=$(openssl rand -hex 32)
    git push heroku main

The server implements the Model Context Protocol. Point your client at it and you get the same sandboxed execution. We have implementations for Python, Ruby, Node, and Go. Each repo has a deploy button if you prefer one-click setup.

Start building more powerful, efficient AI agents by trying out our code execution sandboxes today.

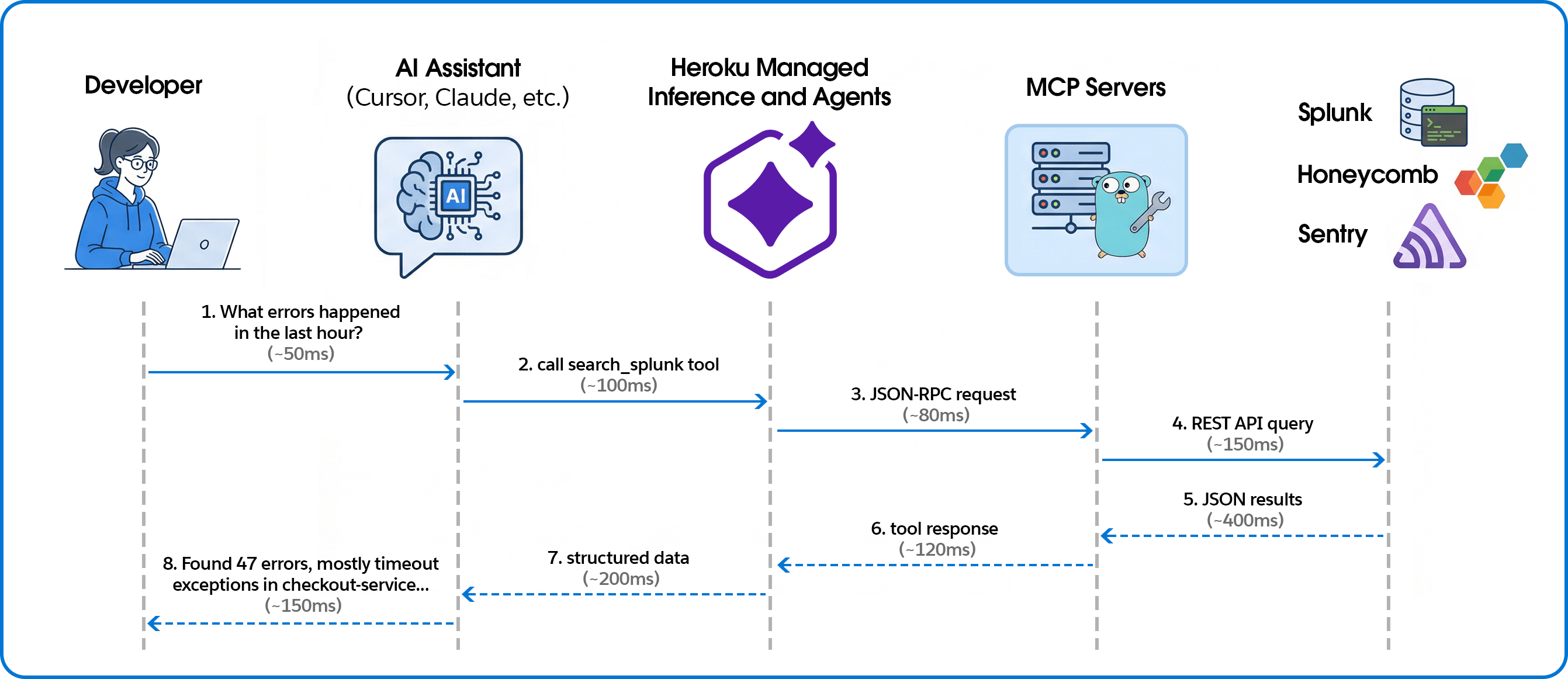

The post Building AI-Powered Observability with Heroku Managed Inference and Agents appeared first on Heroku.

If you’ve ever debugged a production incident, you know the drill: IDE on one screen, Splunk on another, Sentry open in a third tab, frantically copying error messages between windows while your PagerDuty keeps buzzing.

You ask “What errors spiked in the last hour?” but instead of an answer, you have to context-switch, recall complex query syntax, and mentally correlate log timestamps with your code. By the time you find the relevant log, you’ve lost your flow. Meanwhile the incident clock keeps ticking away.

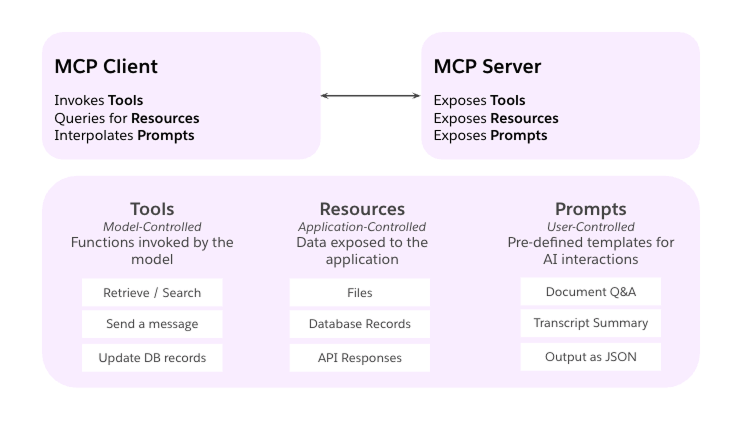

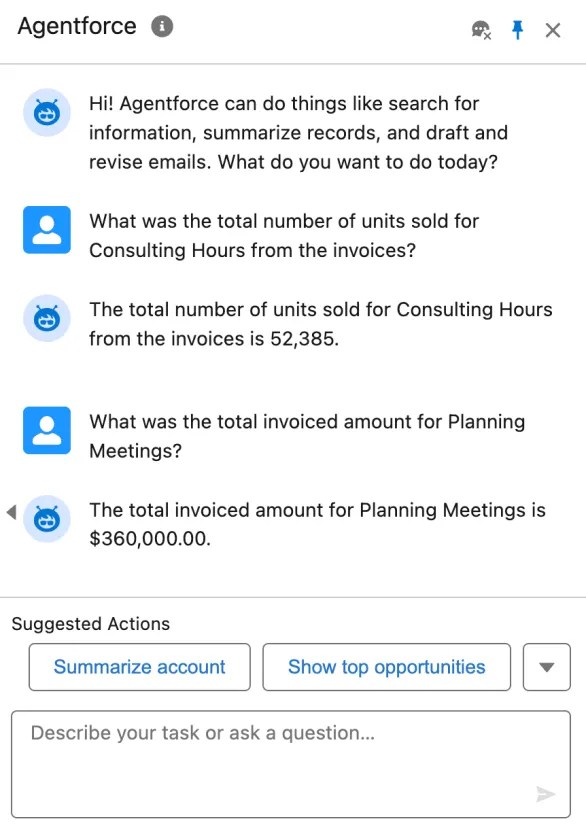

The workflow below fixes that broken loop. We’ll show you how to use the Model Context Protocol (MCP) and Heroku Managed Inference and Agents to pipe those observability queries directly into your IDE, turning manual hunting into instant answers.

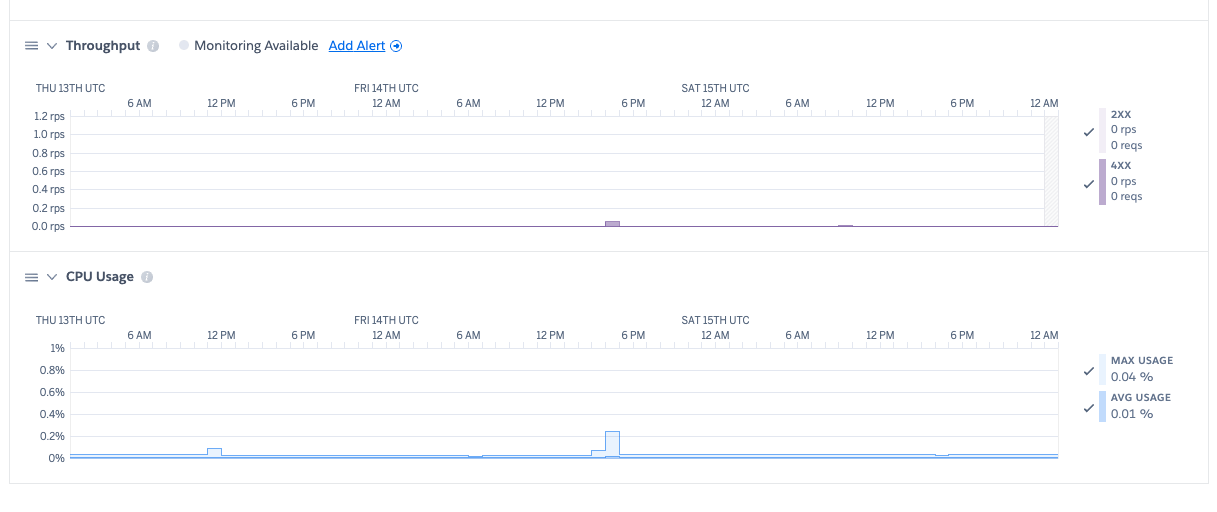

Connecting telemetry to code for AI-powered observability

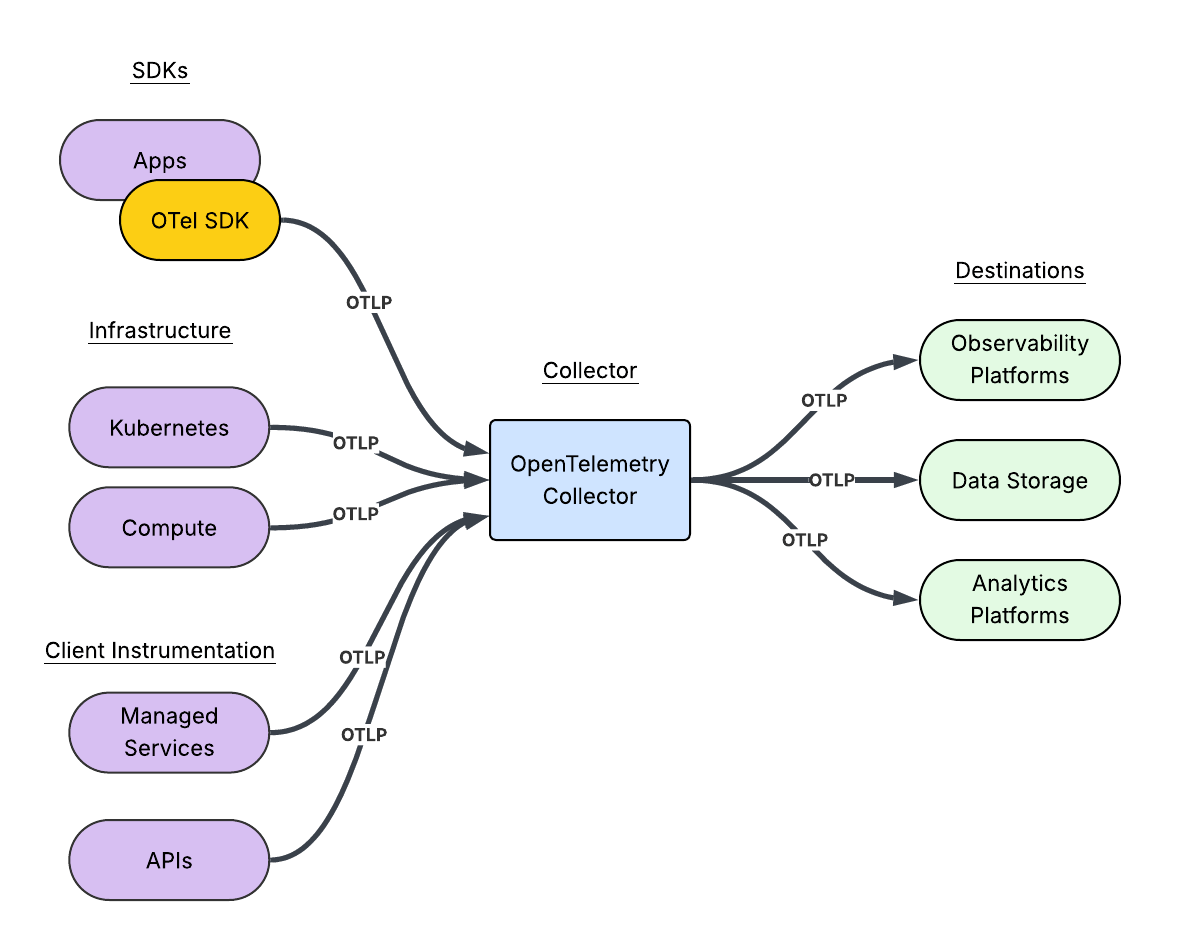

The system connects AI coding assistants to observability platforms through the Model Context Protocol (MCP), with Managed Inference and Agents handling the transport layer.

Building the MCP servers

Unified tool interface

We expose each observability platform through MCP’s consistent tool interface. Here’s how we define a Splunk search tool:

    searchTool := mcp.NewTool("search_splunk",
        mcp.WithDescription("Execute a Splunk search query and return the results."),
        mcp.WithString("search_query", mcp.Description("The search query to execute")),
        mcp.WithString("earliest_time", mcp.Description("Start time for the search")),
        mcp.WithString("latest_time", mcp.Description("End time for the search")),
        mcp.WithNumber("max_results", mcp.Description("Maximum number of results")),
    )

The AI assistant sees this as a callable tool with typed parameters. When a user asks about errors, the assistant decides which tool to call and constructs the appropriate arguments.

Handling tool calls

Tool handlers translate MCP requests into platform-specific API calls:

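    s.AddTool(searchTool, func(ctx context.Context, request mcp.CallToolRequest) (*mcp.CallToolResult, error) {
        // search_query is the only required argument; the rest fall back to defaults.
        searchQuery, err := request.RequireString("search_query")
        if err != nil {
            return mcp.NewToolResultError(err.Error()), nil
        }
        earliestTime := request.GetString("earliest_time", "-24h")
        latestTime := request.GetString("latest_time", "now")
        maxResults := request.GetInt("max_results", 100)

        results, err := client.Search(ctx, searchQuery, earliestTime, latestTime, maxResults)
        if err != nil {
            // Report the failure as a tool error so the assistant can react to it.
            return mcp.NewToolResultError(fmt.Sprintf("Error: %v", err)), nil
        }

        resultData, err := json.Marshal(results)
        if err != nil {
            return mcp.NewToolResultError(fmt.Sprintf("Error: %v", err)), nil
        }
        return mcp.NewToolResultText(string(resultData)), nil
    })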

Multi-platform support

The same pattern works across observability platforms. For Honeycomb, we expose dataset queries with filters and breakdowns:

    queryTool := mcp.NewTool("query_honeycomb",
        mcp.WithDescription("Execute a Honeycomb query with filters and breakdowns"),
        mcp.WithString("dataset", mcp.Description("The dataset to query")),
        mcp.WithString("calculation", mcp.Description("COUNT, AVG, P99, etc.")),
        mcp.WithString("filter_column", mcp.Description("Column to filter on")),
        mcp.WithString("filter_value", mcp.Description("Value to filter for")),
    )

For Sentry, in addition to Sentry tools, we enabled direct event lookup from URLs—paste a Sentry link and get the full JSON:

    eventTool := mcp.NewTool("get_sentry_event",
        mcp.WithDescription("Get event by URL or ID - paste Sentry event URL to fetch full JSON"),
        mcp.WithString("event_url_or_id", mcp.Description("Sentry event URL or event ID")),
    )
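A handler for `event_url_or_id` first has to work out which of the two it received. The sketch below shows one way to do that in Python. It is an illustration only, not the server's implementation. It assumes event IDs are 32-character hex strings and that event URLs contain an `/events/<id>` path segment. The `extract_event_id` helper is hypothetical.

```python
import re

# Assumption: a Sentry event ID is a 32-character hexadecimal string.
EVENT_ID = re.compile(r"[0-9a-fA-F]{32}")
# Assumption: event URLs carry the ID in an ".../events/<id>" path segment.
EVENT_URL = re.compile(r"/events/([0-9a-fA-F]{32})")


def extract_event_id(url_or_id: str) -> str:
    """Return the lowercase event ID from either a bare ID or an event URL."""
    value = url_or_id.strip()
    if EVENT_ID.fullmatch(value):
        return value.lower()
    match = EVENT_URL.search(value)
    if match is None:
        raise ValueError(f"no event ID found in {url_or_id!r}")
    return match.group(1).lower()
```

With the ID in hand, the handler can make a single lookup call regardless of what the user pasted.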

Deploying with Heroku Managed Inference and Agents

Heroku Managed Inference and Agents provides an MCP gateway that handles the SSE transport layer, letting you deploy MCP servers as simple STDIO processes.

Create the app, attach the add-on, configure, and deploy:

    heroku create your-observability-mcp
    heroku addons:create heroku-inference:claude-4-5-haiku -a your-observability-mcp

    # Set credentials for your observability platform
    heroku config:set YOUR_PLATFORM_CREDENTIALS -a your-observability-mcp

    # Deploy
    git push heroku main

Get the inference token:

    heroku config:get INFERENCE_KEY -a your-observability-mcp

Team members add this to their Cursor or Claude configuration:

    {
      "mcpServers": {
        "splunk": {
          "command": "npx",
          "args": ["-y", "mcp-remote", "https://us.inference.heroku.com/mcp/sse",
                   "--header", "Authorization:Bearer YOUR_INFERENCE_TOKEN"]
        }
      }
    }

Contextualizing error spikes

In a traditional dashboard, you see a red bar. With MCP, you get an answer. We asked the agent, “What error types are most common in production today?” and it returned the ranked list below.

| Rank | Error Type | Count | Primary Source |
|---|---|---|---|
| 1 | TimeoutException | 847 | checkout-service, payment processing |
| 2 | ConnectionRefused | 312 | database pool exhaustion, redis |
| 3 | NullPointerException | 156 | user-profile-api, missing field handling |
| 4 | RateLimitExceeded | 98 | external-api-gateway, third-party calls |
| 5 | AuthenticationFailed | 67 | session-service, expired tokens |
| 6 | ResourceNotFound | 54 | inventory-api, stale cache references |
| 7 | CircuitBreakerOpen | 41 | payments-api, downstream failures |
| 8 | DeserializationError | 28 | webhook-processor, malformed payloads |

While the distribution might look standard, the AI can help you interpret the security implications. For example, the AI can correlate a rise in AuthenticationFailed errors with specific geographic regions to confirm a brute-force attempt or credential attack, or identify that RateLimitExceeded errors are coming from a single subnet. This context transforms a generic “error count” into actionable security intelligence.
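The single-subnet check is simple enough to sketch. The example below is a hypothetical illustration of the analysis an assistant might run over exported events, not part of the MCP servers. The /24 prefix, the 80% threshold, the sample IPs, and the helper names are all assumptions.

```python
import ipaddress
from collections import Counter


def subnet_counts(source_ips, prefix=24):
    """Count requests per subnet, most frequent first."""
    counts = Counter(
        str(ipaddress.ip_network(f"{ip}/{prefix}", strict=False))
        for ip in source_ips
    )
    return counts.most_common()


def dominant_subnet(source_ips, share=0.8, prefix=24):
    """Return the subnet behind at least `share` of the events, or None."""
    ranked = subnet_counts(source_ips, prefix)
    if not ranked:
        return None
    subnet, count = ranked[0]
    return subnet if count / len(source_ips) >= share else None


# Hypothetical source IPs pulled from RateLimitExceeded log events.
ips = [f"203.0.113.{n}" for n in range(1, 10)] + ["198.51.100.4"]
print(dominant_subnet(ips))
```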

Why AI-native observability changes the game

Connecting your observability stack to your IDE via MCP does more than just save you a few clicks; it keeps you in the flow during an incident. By letting Heroku Managed Inference and Agents handle the proprietary query syntax, any engineer can interrogate production data as easily as a platform specialist. Why this works better:

- Automate complex security audits: We processed 175,000+ events in minutes to clear a suspicious account flag, turning hours of manual log analysis into a single natural language question.

- Bypass the syntax barrier: Engineers ask questions in natural language instead of wrestling with complex SPL or Honeycomb queries. No one needs to remember platform-specific syntax at 2 AM.

- Deploy “Day One” observability: New hires can query production state immediately without mastering your specific observability stack or acronyms. The AI translates intent into execution.

- Debug directly in context: Stop toggling between IDE and browser. By pulling telemetry into your local environment, you keep your mental model intact and fix issues where the code lives.

- Instant root cause analysis: Simply paste a URL to get an immediate root cause analysis with suggested fixes, skipping the manual correlation step entirely.

Extend your AI assistant with any API

Moving from siloed observability tools to an AI-integrated debugging workflow requires bridging the gap between platforms and your IDE. We built this using Heroku Managed Inference and Agents and the Model Context Protocol, and the same pattern works for any API you want to bring into your AI assistant.

Whether it’s observability, internal tools, or customer data — if you can call an API, you can expose it as an MCP tool. Heroku Managed Inference and Agents handles the transport, authentication, and hosting. You focus on the integration.

What will you build? Get started with Heroku Managed Inference and Agents.

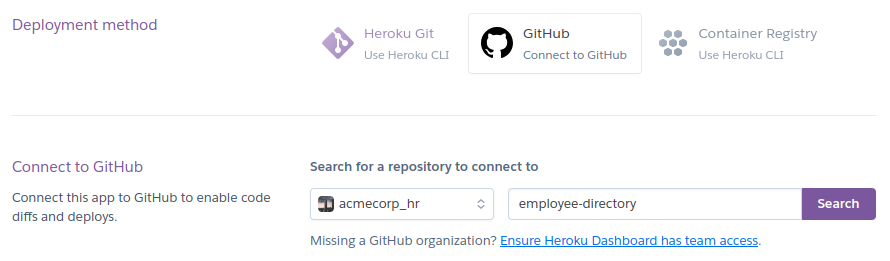

The post Heroku and GitHub Enterprise Server: Stronger Security, Seamless Delivery appeared first on Heroku.

Today, we are thrilled to announce the General Availability (GA) of the Heroku GitHub Enterprise Server Integration.

For our Enterprise customers, the bridge between code and production must be more than just convenient. It must be resilient, secure, and governed at scale. While our legacy OAuth integration served us well, the modern security landscape demands a shift away from personal credentials toward managed service identities.

Why switch to the GitHub Apps integration?

This new integration is built on GitHub Apps, moving beyond the limitations of personal OAuth tokens to provide a more robust connection for mission-critical pipelines.

- Decoupled authentication: Historically, if the developer who set up a pipeline left the organization, the deployment would break. With this integration, the GitHub App acts as its own identity. Your CI/CD pipelines remain stable regardless of personnel changes.

- Granular security: GitHub Apps offer superior permission control compared to broad OAuth scopes. You can allowlist specific repositories and define exactly what Heroku can see and do.

- Zero service accounts: You no longer need to manage and pay for a separate “bot user” to act as a service account. The GitHub App acts on its own behalf, reducing overhead and security surface area.

Strategic benefits for DevOps teams

By moving to this integration, you unlock the full power of Heroku Flow for your private GitHub Enterprise Server instances:

- Enhanced CI/CD automation: Seamlessly link your GitHub Enterprise repositories to Heroku Pipelines to orchestrate the flow of code from staging to production. Ensure that your GitHub Actions pass successfully before any code is automatically deployed, maintaining a high bar for production stability.

- Review apps for every PR: Give your stakeholders and QA teams instant, isolated environments to test feature branches, fully integrated within your GitHub Enterprise Server firewall.

- Repeatable “golden paths”: When combined with Terraform, you can now programmatically provision Heroku Apps that are automatically linked to your Enterprise repos via a secure, organization-level handshake.

- Enterprise governance: Admins gain a “single pane of glass” view in the Heroku Enterprise Account settings to see all authorized organizations and manage repo access across the entire fleet of applications.

Getting started

The integration is available today for all Heroku Enterprise customers. Because this is an organization-level change, we recommend a phased rollout:

- Step 1: Enable for testing. Reach out to Heroku Support to enable the feature for a specific test team.

- Step 2: Connect. Navigate to your Enterprise Account Settings tab to link your GitHub Enterprise Server URL.

- Step 3: Reconfigure. Update your existing pipelines to use the new connection.

For a step-by-step walkthrough, including prerequisites and limitation details, visit our official Dev Center documentation.

The post An Update on Heroku appeared first on Heroku.

Today, Heroku is transitioning to a sustaining engineering model focused on stability, security, reliability, and support. Heroku remains an actively supported, production-ready platform, with an emphasis on maintaining quality and operational excellence rather than introducing new features. We know changes like this can raise questions, and we want to be clear about what this means for customers.

There is no change for customers using Heroku today. Customers who pay via credit card in the Heroku dashboard—both existing and new—can continue to use Heroku with no changes to pricing, billing, service, or day-to-day usage. Core platform functionality, including applications, pipelines, teams, and add-ons, is unaffected, and customers can continue to rely on Heroku for their production, business-critical workloads.

Enterprise Account contracts will no longer be offered to new customers. Existing Enterprise subscriptions and support contracts will continue to be fully honored and may renew as usual.

Why this change

We’re focusing our product and engineering investments on areas where we can deliver the greatest long-term customer value, including helping organizations build and deploy enterprise-grade AI in a secure and trusted way.

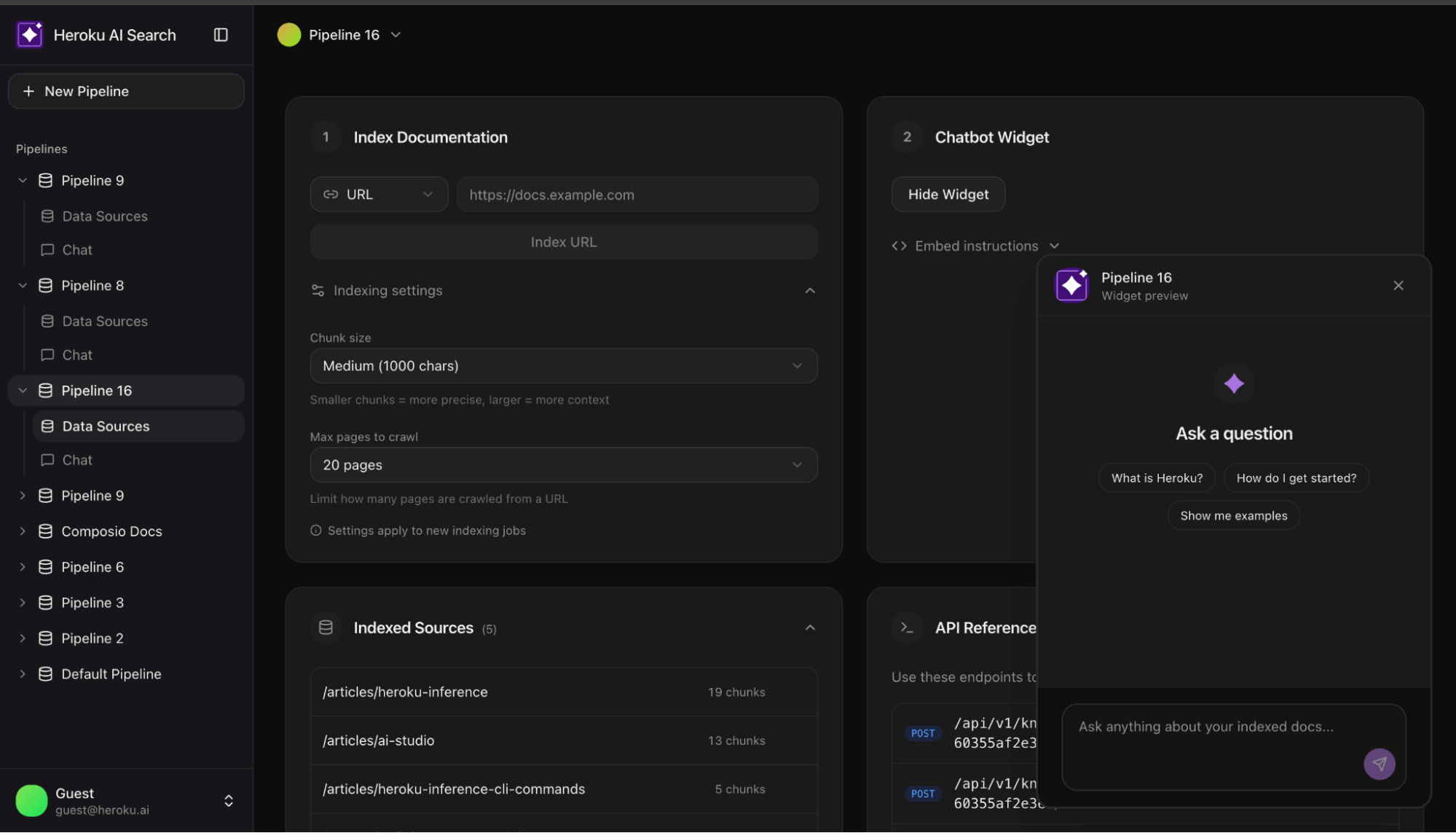

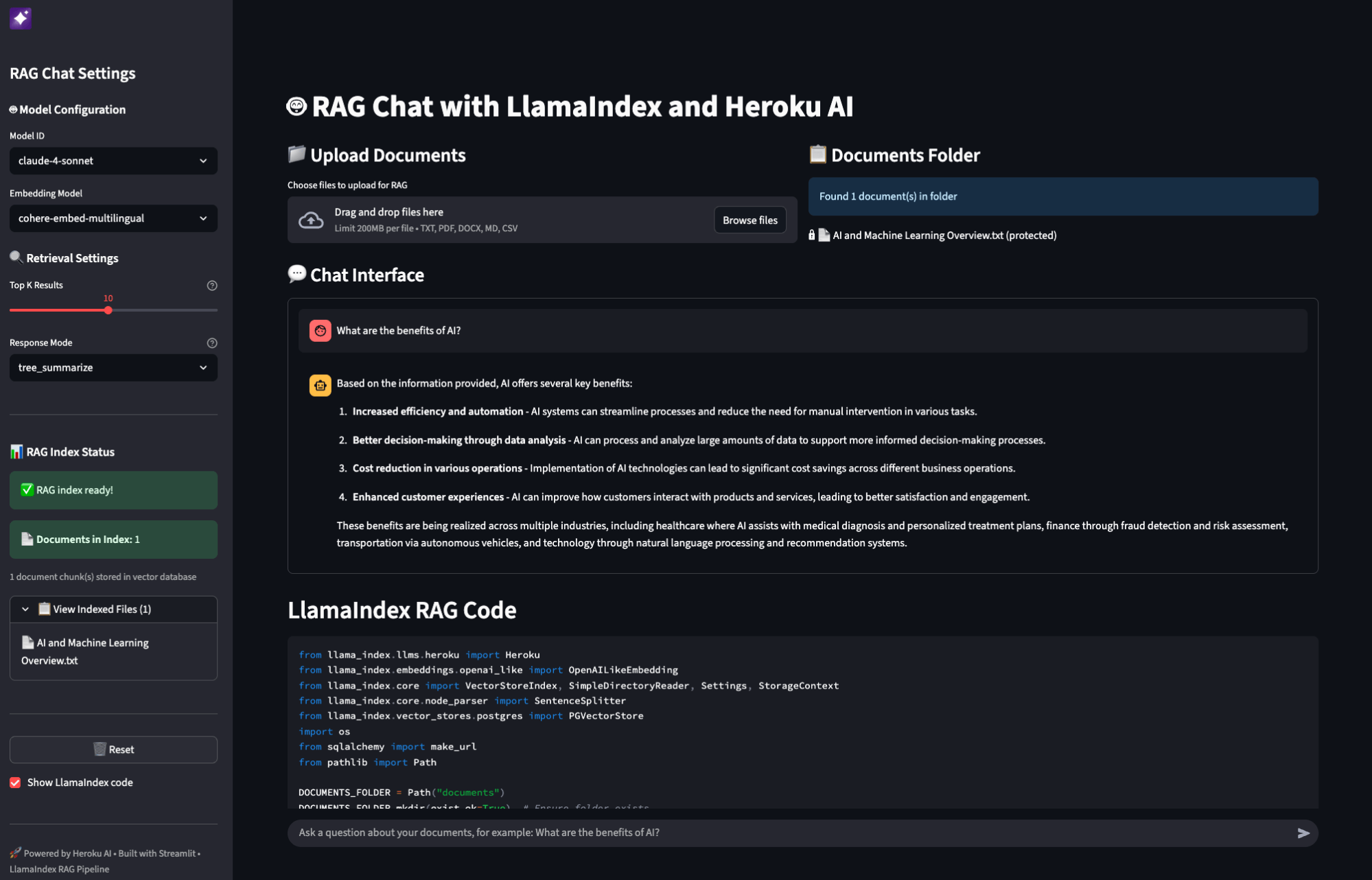

The post Building AI Search on Heroku appeared first on Heroku.

If you’ve built a RAG (Retrieval Augmented Generation) system, you’ve probably hit this wall: your vector search returns 20 documents that are semantically similar to the query, but half of them don’t actually answer it.

A user asks “how do I handle authentication errors?” and gets back documents about authentication, errors, and error handling that sit close together in embedding space, but only one or two are actually useful.

This is the gap between demo and production. Most tutorials stop at vector search. This AI Search reference app shows what comes next: how to build production-grade enterprise AI search using Heroku Managed Inference and Agents.

Why two-stage retrieval

Vector embeddings are coordinates in high-dimensional space, and documents that sit close together share semantic meaning. But semantic proximity is an unreliable proxy for accuracy: a document can be ‘close’ in vector space yet fail to answer the question. You need a second stage where a reranking model scores each retrieved document against the actual query. It asks: “Does this document answer this question?” rather than “Is this document about similar things?”

The difference in result quality is significant. This reference implementation shows how to wire it up and how to make it optional when latency matters more than precision.

Architecture overview

The system consists of two primary pipelines:

- Indexing pipeline: URL → Crawl → Chunk → Embed → pgvector

- Query pipeline: Question → Embed → Vector Search → Rerank → Claude → Answer

Heroku services

| Component | Service | Role |
|---|---|---|
| Embeddings | Heroku Managed Inference and Agents | Converts text to vectors (Cohere Embed Multilingual) |
| Reranking | Heroku Managed Inference and Agents | Scores query-document relevance (Cohere Rerank 3.5) |
| Generation | Heroku Managed Inference and Agents | Produces answers from context (Claude 3.5 Sonnet) |
| Storage | Heroku Postgres + pgvector | Stores vectors, runs similarity queries |

Streamlining the stack: Unified provider setup

    const heroku = createHerokuAI({
      chatApiKey: process.env.HEROKU_INFERENCE_TEAL_KEY,
      embeddingsApiKey: process.env.HEROKU_INFERENCE_GRAY_KEY,
      rerankingApiKey: process.env.HEROKU_INFERENCE_BLUE_KEY,
    });

Indexing: Getting documents in

Crawling real-world sites

Documentation sites are messy. Naive scraping often extracts more navigation links than actual content. The crawler uses a simple heuristic to detect junk:

    function isLikelyJunkContent(content: string, htmlLength: number): boolean {
      // If HTML is huge but text is tiny, it's likely boilerplate
      if (htmlLength > 100000 && content.length < htmlLength * 0.05) return true;

      const navPatterns = ['sign in', 'login', 'menu', 'pricing'];
      // If the start of the doc is stuffed with nav links
      return navPatterns.filter(p => content.slice(0, 500).includes(p)).length >= 4;
    }

Chunking at natural boundaries

Documents are split into 1000 character chunks with 200 characters of overlap. To avoid losing meaning, we prioritize splitting at paragraph or sentence breaks.
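The chunking rule can be sketched in a few lines of Python. This is an illustrative approximation, not the reference app's code. The `chunk_text` helper is hypothetical, and the choice to look for a break only in the second half of each window is an assumption made to keep chunks from becoming too small.

```python
def chunk_text(text, size=1000, overlap=200):
    """Split text into overlapping chunks, preferring paragraph or sentence breaks."""
    if overlap >= size // 2:
        raise ValueError("overlap must be less than half the chunk size")
    chunks = []
    start = 0
    while start < len(text):
        end = min(start + size, len(text))
        if end < len(text):
            window = text[start:end]
            # Prefer a paragraph break, then a sentence break, but only in the
            # second half of the window so chunks stay reasonably large.
            for sep in ("\n\n", ". "):
                cut = window.rfind(sep)
                if cut >= size // 2:
                    end = start + cut + len(sep)
                    break
        chunk = text[start:end].strip()
        if chunk:
            chunks.append(chunk)
        if end >= len(text):
            break
        # Step back so consecutive chunks share `overlap` characters.
        start = end - overlap
    return chunks
```

If no paragraph or sentence break falls in the second half of the window, the chunk is cut at the hard 1000-character limit.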

Batch storage with pgvector

The unnest function allows inserting hundreds of chunks in a single SQL query:

    INSERT INTO chunks (pipeline_id, url, title, content, embedding)
    SELECT
      ${pipelineId}::uuid,
      unnest(${urls}::text[]),
      unnest(${titles}::text[]),
      unnest(${contents}::text[]),
      unnest(${embeddings}::vector[])

Retrieval: The two-stage pattern

Stage 1: Vector search

Retrieve the top 20 chunks by cosine similarity. This is fast and scales well in Postgres.

    SELECT content, 1 - (embedding <=> ${vector}::vector) as similarity
    FROM chunks
    ORDER BY embedding <=> ${vector}::vector
    LIMIT 20
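In pgvector, `<=>` is the cosine distance operator, so `1 - distance` gives cosine similarity and ordering by ascending distance returns the most similar chunks first. The pure-Python sketch below mirrors that query on in-memory vectors for illustration. The helper names are hypothetical, and a real deployment would leave this work to Postgres.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two non-zero vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def cosine_distance(a, b):
    """Equivalent of pgvector's <=> operator: 1 minus cosine similarity."""
    return 1.0 - cosine_similarity(a, b)


def top_k(query, vectors, k=20):
    """Return (index, similarity) pairs ordered by ascending cosine distance."""
    ranked = sorted(
        range(len(vectors)), key=lambda i: cosine_distance(query, vectors[i])
    )
    return [(i, cosine_similarity(query, vectors[i])) for i in ranked[:k]]
```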

Stage 2: Semantic reranking

Pass those 20 candidates to a reranking model. Rerankers use a cross-encoder architecture, processing the query and document together to score relevance accurately.

    const reranked = await rerankModel.doRerank({
      query,
      documents: { type: "text", values: chunks.map(c => c.content) },
      topN: 5
    });

Streaming: Responsive UX

RAG involves multiple steps (Embed → Search → Rerank → Generate). To prevent a “blank screen,” use Server-Sent Events (SSE) to stream progress:

    send("step", { step: "embedding" });
    send("step", { step: "searching" });
    send("step", { step: "reranking" });
    send("sources", { sources }); // Show sources while LLM generates

    for await (const chunk of streamRAGResponse(message, context)) {
      send("text", { content: chunk });
    }
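On the wire, each of those `send` calls becomes a small text frame: an `event:` line, a `data:` line, and a blank line. The Python sketch below shows that framing under the assumption that payloads are JSON-encoded. The `sse_event` and `rag_event_stream` helpers are hypothetical and only mirror the event names used above.

```python
import json


def sse_event(event, payload):
    """Format one Server-Sent Event: event name, JSON data line, blank line."""
    return f"event: {event}\ndata: {json.dumps(payload)}\n\n"


def rag_event_stream(sources, answer_chunks):
    """Yield SSE frames in the order the pipeline stages report progress."""
    for step in ("embedding", "searching", "reranking"):
        yield sse_event("step", {"step": step})
    # Sources go out before generation so the UI can show them early.
    yield sse_event("sources", {"sources": sources})
    for chunk in answer_chunks:
        yield sse_event("text", {"content": chunk})
```

In a real handler, each step frame would be sent when its stage actually starts, rather than all at once.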

Conclusion: Deploy the reference app

Moving from a demo to a production-grade RAG system requires bridging the gap between similarity and relevance. This architecture provides a battle-tested foundation using Heroku Managed Inference and Agents and pgvector to ensure your search results actually answer user questions. Deploy the reference chatbot today to see how two-stage retrieval transforms your documentation search.

The post Optimize Search Precision with Reranking on Heroku AI appeared first on Heroku.

Today, we are announcing the general availability of reranking models on Heroku Managed Inference and Agents, featuring support for Cohere Rerank 3.5 and Amazon Rerank 1.0.

Semantic reranking models score documents based on their relevance to a specific query. Unlike keyword search or vector similarity, rerank models understand nuanced semantic relationships to identify the most relevant documents for a given question. Reranking acts as your RAG pipeline’s high-fidelity filter, decreasing noise and token costs by identifying which documents best answer the specific query.

Implement two-stage retrieval with the Heroku Rerank API

The Heroku Managed Inference API is designed to be compatible with the Cohere format. Integrate reranking into your existing RAG (retrieval augmented generation) stack by sending a request to the /v1/rerank endpoint.

To get started, provision a model via the Heroku CLI:

heroku ai:models:create -a your-app-name cohere-rerank-3-5 --as RERANK

Once the model is provisioned, you can set your environment variables and implement reranking with a simple request. In this example, we verify which technical documents best answer a query about database optimization:

    const response = await fetch(`${process.env.RERANK_URL}/v1/rerank`, {
      method: 'POST',
      headers: {
        'Authorization': `Bearer ${process.env.RERANK_KEY}`,
        'Content-Type': 'application/json'
      },
      body: JSON.stringify({
        model: process.env.RERANK_MODEL_ID,
        query: 'How do I optimize database connection pooling?',
        documents: [
          'Connection pooling reduces overhead by reusing existing database connections.',
          'You can monitor application performance using built-in metrics and logging tools.',
          'Set max pool size based on your dyno count to prevent connection exhaustion.',
          'Regular database backups are essential for disaster recovery planning.'
        ],
        top_n: 2
      })
    });

    const { results } = await response.json();
    console.log(results);
    /*
    [
      { index: 0, relevance_score: 0.5948578715324402 },
      { index: 2, relevance_score: 0.42105236649513245 }
    ]
    */

Analyzing reranker relevance scores

The rerank endpoint returns a comprehensive result object that allows you to map scores back to your original data. Each item in the results array contains an index, which represents the original position of the document in the input array, and a relevance_score, which is a normalized float where higher values indicate better alignment with the query. This structure allows teams to set strict quality thresholds, only passing information to the AI agent if the reranker confirms it is highly relevant.
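A short sketch of that mapping and thresholding, using the documents and scores from the example above. The `select_relevant` helper and the 0.5 cutoff are illustrative assumptions. A sensible threshold depends on your data and should be tuned.

```python
def select_relevant(documents, results, min_score=0.5):
    """Map rerank results back to their documents and drop low-scoring ones."""
    selected = []
    for item in results:
        if item["relevance_score"] >= min_score:
            # `index` points at the document's position in the input array.
            selected.append((documents[item["index"]], item["relevance_score"]))
    return selected


documents = [
    "Connection pooling reduces overhead by reusing existing database connections.",
    "You can monitor application performance using built-in metrics and logging tools.",
    "Set max pool size based on your dyno count to prevent connection exhaustion.",
    "Regular database backups are essential for disaster recovery planning.",
]
results = [
    {"index": 0, "relevance_score": 0.5948578715324402},
    {"index": 2, "relevance_score": 0.42105236649513245},
]

for text, score in select_relevant(documents, results):
    print(f"{score:.2f}  {text}")
```

With a 0.5 cutoff only the first document is passed on. Lowering the cutoff to 0.4 would keep both.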

Top-N context filtering for accuracy and reduced cost

The top_n parameter allows you to limit the number of results returned. This is particularly useful for retrieving only the most relevant documents to keep your context window clean and reduce inference costs. If not specified, the API will return scores for all provided documents.

Regional availability and limits

To support global performance and data residency requirements, these models are available in both US and EU regions. Performance is managed through specific rate limits and transparent pricing models:

- Cohere Rerank 3.5 supports up to 250 requests per minute at $2.00 per 1,000 queries.

- Amazon Rerank 1.0 supports up to 200 requests per minute at $1.00 per 1,000 queries.
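These figures make capacity planning a matter of simple arithmetic. The sketch below estimates cost and minimum wall-clock time for a batch, under the assumption that one query corresponds to one request against the rate limit. The `estimate` helper and the 50,000-query batch size are illustrative.

```python
import math

# Rate limits and prices as listed above.
RERANK_MODELS = {
    "Cohere Rerank 3.5": {"requests_per_minute": 250, "usd_per_1k_queries": 2.00},
    "Amazon Rerank 1.0": {"requests_per_minute": 200, "usd_per_1k_queries": 1.00},
}


def estimate(model, queries):
    """Return (cost in USD, minimum minutes at the rate limit) for a batch."""
    limits = RERANK_MODELS[model]
    cost = queries / 1000 * limits["usd_per_1k_queries"]
    # Assumption: one query consumes one request from the per-minute limit.
    minutes = math.ceil(queries / limits["requests_per_minute"])
    return cost, minutes


for name in RERANK_MODELS:
    cost, minutes = estimate(name, 50_000)
    print(f"{name}: ${cost:.2f}, at least {minutes} minutes")
```

For 50,000 queries this works out to $100.00 and at least 200 minutes on Cohere Rerank 3.5, versus $50.00 and at least 250 minutes on Amazon Rerank 1.0.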

Next steps

By bringing managed reranking to Heroku, we are giving developers the tools to build enterprise-grade search and retrieval without the overhead of managing infrastructure. Visit the Heroku Managed Inference Documentation for full technical specifications and implementation guides.

The post Heroku AI: Accelerating AI Development With New Models, Performance Improvements, and Messages API appeared first on Heroku.

This month marks a significant expansion for Heroku Managed Inference and Agents, directly accelerating our AI PaaS framework. We’re announcing a substantial addition to our model catalog, providing access to leading proprietary AI models such as Claude Opus 4.5 and Nova 2, and open-weight models such as Kimi K2 Thinking, MiniMax M2, and Qwen3. These resources are fully managed, secure, and accessible via a single CLI command. We have also refreshed aistudio.heroku.com. Navigate to it from your Managed Inference and Agents add-on to access the models you have provisioned.

Whether you are building complex reasoning agents or high-performance consumer applications, here’s what’s new in our platform. All of the open-weight models you access on Heroku are running on secure compute on AWS servers. Neither Heroku nor the model provider has access to your data and it is not used in training.

Expanding Heroku’s AI catalog with new state of the art models

Claude 4.5 models

We now support the full Claude 4.5 family in both US and EU regions, replacing the prior Claude 3 models, which are scheduled for deprecation in January of 2026.

- Claude Opus 4.5: Designed for deep reasoning, complex task orchestration, and long-horizon planning. Recommended for demanding agentic workflows.

- Claude Sonnet 4.5: Balanced model for enterprise workloads, coding, and analysis.

- Claude Haiku 4.5: Low-latency model for high-volume tasks and classification.

Open-weight models

We have added several open-weight models to Heroku Managed Inference and Agents.

- Kimi K2 Thinking: Specialized for chain-of-thought processing, writing, and reasoning tasks.

- MiniMax M2: Optimized for creative generation, roleplay, and coding agents.

- Qwen3 (235B & Coder 480B): Large models delivering exceptional performance as coding agents.

Nova models

- Amazon Nova 2 Lite: The Nova 2 family is now available, replacing the previous generation. These models provide updated multimodal capabilities and improved price-performance ratios.

Anthropic’s Messages API (Heroku preview)

Heroku now offers preview support for the Messages API format for all Anthropic models on Heroku. The API format is an alternative to the standard chatCompletions API and aligns with the Claude SDKs, enabling direct integration with Claude Code and the Claude Agents SDK.

Technical implementation and authentication

For the v1/messages endpoint, authentication mirrors Anthropic’s standard practice. Set your Heroku add-on’s INFERENCE_KEY as the value of the x-api-key HTTP header in your request.

Quickstart with Anthropic Python SDK

    import os

    from anthropic import Anthropic

    # Config vars set by the Heroku add-on
    inference_url = os.getenv("INFERENCE_URL")
    inference_key = os.getenv("INFERENCE_KEY")
    inference_model = os.getenv("INFERENCE_MODEL")

    # The SDK sends api_key as the x-api-key header
    client = Anthropic(
        api_key=inference_key,
        base_url=inference_url
    )

    message = client.messages.create(
        model=inference_model,
        max_tokens=1024,
        messages=[
            {"role": "user", "content": "Hello, what should I build today?"}
        ]
    )

    print(message.content[0].text)

Key Constraints for Developers

- Beta Features: We do not currently support the anthropic-beta header.

- Claude Code: To ensure compatibility, set CLAUDE_CODE_DISABLE_EXPERIMENTAL_BETAS=1.

- Scope: The Messages API is exclusively available for Anthropic models.
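If you launch Claude Code from a script, the constraints above might translate into an environment like the one sketched here. Only CLAUDE_CODE_DISABLE_EXPERIMENTAL_BETAS comes from this post. The helper name `claude_code_env` is hypothetical, and using ANTHROPIC_BASE_URL and ANTHROPIC_API_KEY to redirect Claude Code to your Heroku endpoint is an assumption based on Claude Code’s own configuration options, so check the Heroku documentation before relying on it.

```python
import os


def claude_code_env(inference_url, inference_key, base_env=None):
    """Return a copy of the environment prepared for Claude Code on Heroku.

    Hypothetical helper for illustration only.
    """
    env = dict(os.environ if base_env is None else base_env)
    env.update({
        # Assumption: Claude Code reads these two variables to pick its endpoint and key.
        "ANTHROPIC_BASE_URL": inference_url,
        "ANTHROPIC_API_KEY": inference_key,
        # From the constraint above: disable beta features for compatibility.
        "CLAUDE_CODE_DISABLE_EXPERIMENTAL_BETAS": "1",
    })
    return env


# Possible usage (assumes the `claude` command is installed and on PATH):
#   import subprocess
#   subprocess.run(["claude"], env=claude_code_env(url, key))
```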

Performance boost: automatic prompt caching

Heroku now caches system prompts and tool definitions to reduce latency on repeated requests. Prompt caching is enabled by default and requires no code changes. Only system prompts and tool definitions are cached; user messages and conversation history are excluded, and cached content expires automatically to ensure privacy and security. To disable caching for a request, add a single HTTP header: X-Heroku-Prompt-Caching: false.
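Opting a request out of caching could look something like the sketch below. The header name and value come from this post; the helper `without_prompt_caching` is hypothetical and simply merges the header into whatever headers you already send.

```python
def without_prompt_caching(headers=None):
    """Return a copy of `headers` with Heroku prompt caching disabled.

    Hypothetical helper for illustration only.
    """
    merged = dict(headers or {})
    merged["X-Heroku-Prompt-Caching"] = "false"
    return merged


# With the Anthropic Python SDK, per-request headers can be passed through
# the `extra_headers` argument, for example:
#   client.messages.create(..., extra_headers=without_prompt_caching())
```

Returning a copy rather than mutating the input keeps the opt-out scoped to a single request, so other requests keep the default caching behavior.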

Lifecycle updates

Deprecations

- Claude 3 Family: The Claude 3 models (Sonnet 3.5, Sonnet 3.7, Haiku 3, and Haiku 3.5) will be deprecated as of January 30, 2026. Workloads should migrate to the Claude 4.5 family.

- Nova 1st Gen: The first-generation Nova models will be deprecated as of February 28, 2026, in favor of Nova 2.

- Model Fallback: We are working on a default model fallback mechanism: if your model is deprecated, your requests will automatically switch to a similar, more recent model in the same family.

Heroku AI PaaS: Accelerating AI Development

This release brings state-of-the-art reasoning and efficient open-weight models to the Heroku platform. With the addition of prompt caching, you can now optimize latency with minimal configuration. We recommend validating your applications with the Claude 4.5 and Nova 2 families ahead of the upcoming deprecation cycle. We would love to hear your feedback and feature requests; please reach out to [email protected].

The post Heroku AI: Accelerating AI Development With New Models, Performance Improvements, and Messages API appeared first on Heroku.

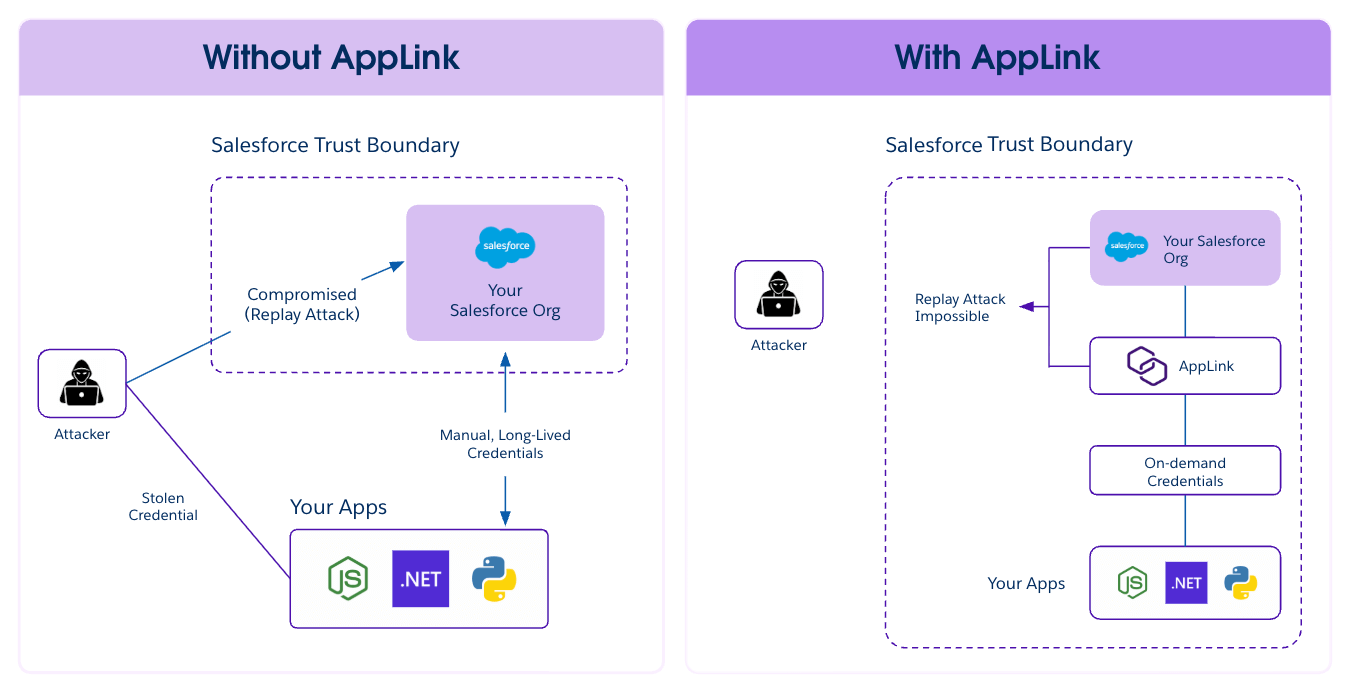

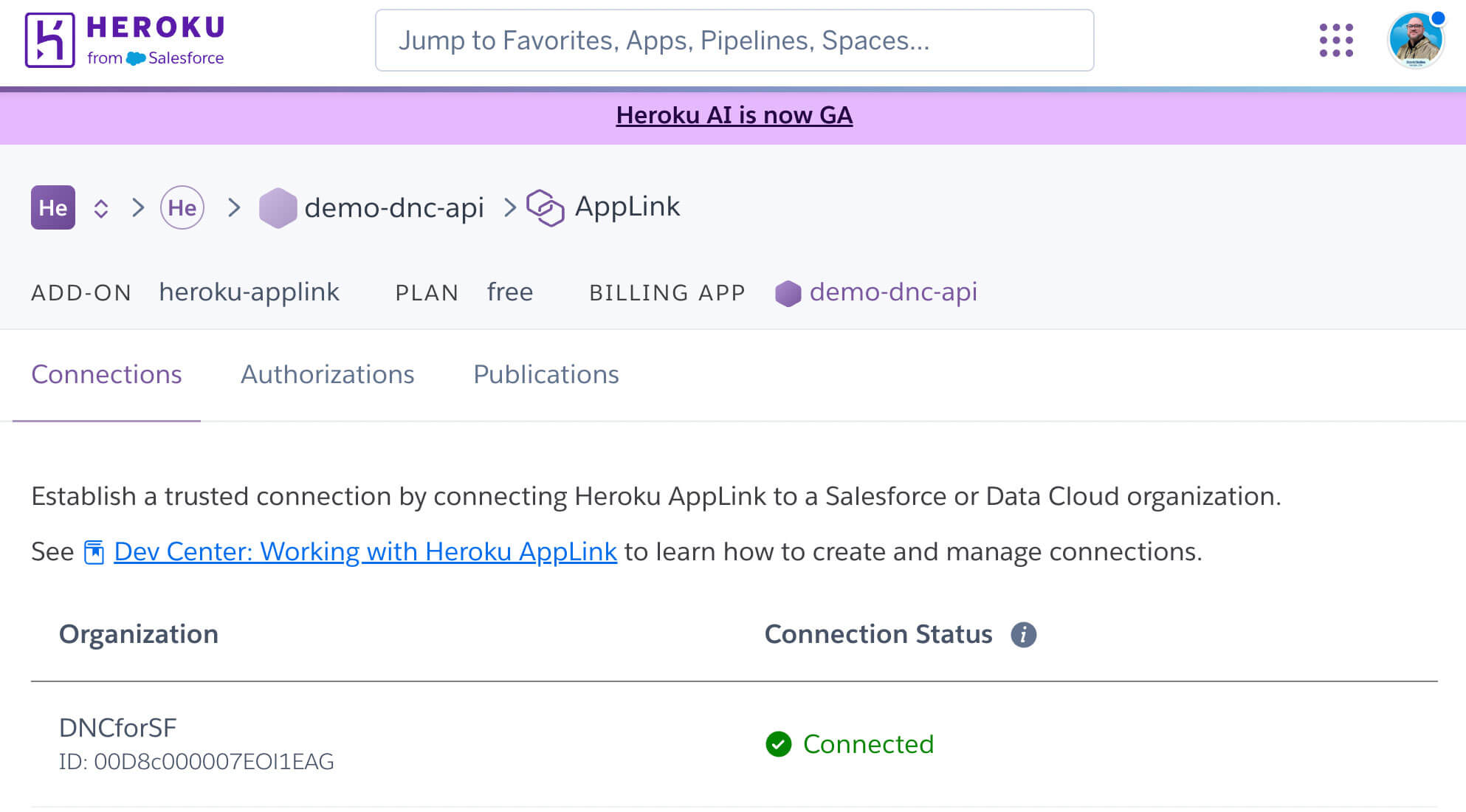

]]>Modern Continuous Integration/Continuous Deployment (CI/CD) pipelines demand machine-to-machine authorization, but traditional web-based flow requires manual steps and often rely on static credentials; a major security risk. Heroku AppLink now uses JWT Authorization to solve both: enabling automated setup and eliminating long-lived secrets. In today’s evolving threat landscape, security attacks increasingly exploit systems that rely on […]

The post Heroku AppLink: Now Using JWT-Based Authorization for Salesforce appeared first on Heroku.

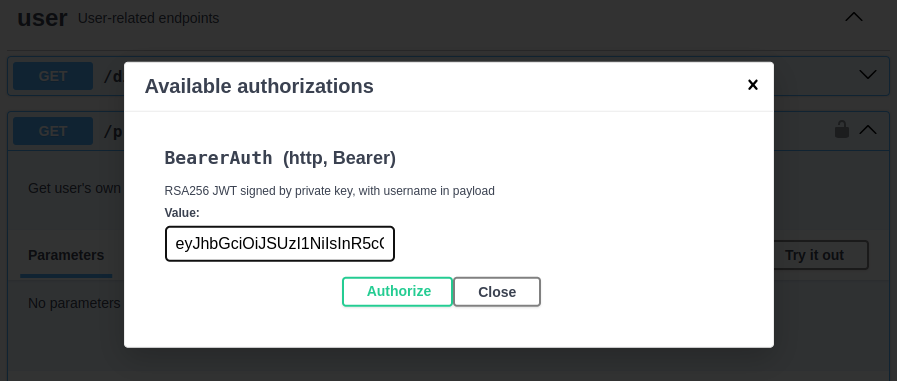

Modern Continuous Integration/Continuous Deployment (CI/CD) pipelines demand machine-to-machine authorization, but traditional web-based flows require manual steps and often rely on static credentials, a major security risk. Heroku AppLink now uses JWT authorization to solve both problems: it enables automated setup and eliminates long-lived secrets.

In today’s evolving threat landscape, security attacks increasingly exploit systems that rely on long-lived access tokens or static credentials. If these credentials are compromised—for instance, if they are stolen from a configuration file or environment variable—attackers can reuse them for persistent, unauthorized access to sensitive data and systems. This vulnerability creates a major security risk that has recently impacted third-party applications across the industry.

Heroku AppLink is designed to deliver a modern, robust security posture by directly tackling this vulnerability. With AppLink, you can secure your microservice integrations by moving away from storing and managing long-lived secrets. The architecture is simple and powerful: AppLink provides your microservice with tokens on demand. This significantly reduces the window of opportunity for an attacker because there is effectively nothing for them to steal or replay to gain long-term access. By switching to dynamic, on-demand credentials, you help keep your Salesforce data protected in a microservice environment.

The shift: Why CI/CD demands JWT-based authorization

Historically, setting up the required authorization—establishing a trusted identity for your Heroku code to interact with Salesforce—relied solely on a web-based OAuth flow. While secure, this method required manual steps and posed a significant challenge for modern, automated deployment pipelines.

To enable true machine-to-machine communication, we’ve extended AppLink with JWT authorization, eliminating the manual steps required by traditional OAuth flows. This expands on the security model already in place, providing a secure, managed boundary between Salesforce and Heroku. Responding directly to feedback from our valued customers and partners who increasingly sought to automate their CI/CD pipelines, we have significantly enhanced AppLink to simplify this critical setup.

What is AppLink, and How JWT Authorization Works

Heroku AppLink is a secure, managed boundary and service that simplifies how you connect your Heroku microservices with your Salesforce org. It is specifically designed to deliver more robust security by moving away from storing and managing long-lived secrets. The architecture provides your microservice with tokens on demand, reducing the window of opportunity for an attacker to steal credentials or execute a replay attack. For more examples, check out this article by Andy Fawcett, Heroku alumnus and veteran Salesforce MVP.

AppLink provides two distinct modes for secure authentication to accommodate various integration scenarios, from user-driven applications to automated background processes.

Web-based OAuth flow

This method is the standard for user-driven applications (like a Salesforce AppExchange app) where an end-user is present to log in and grant explicit, delegated access. While secure, this flow requires manual browser interaction and is not suitable for headless, automated deployment pipelines that require true machine-to-machine authentication.

JWT-based authorization (JSON web token)

This new option directly addresses the need for automation and lets you integrate the AppLink authorization setup into your CI/CD pipeline. JWTs are self-contained, digitally signed tokens that allow your Heroku app to securely assert its identity and permissions when interacting with Salesforce APIs, without transmitting credentials repeatedly. This approach is highly recommended for server-to-server communication where a seamless and secure integration is paramount.
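To make "self-contained, digitally signed" more concrete, here is a minimal, standard-library sketch of how a JWT is assembled and checked. It is not how AppLink is implemented: it uses a shared-secret HS256 signature to stay dependency-free, whereas Salesforce’s JWT bearer flow relies on RS256 with a certificate, and AppLink manages that exchange for you. The function names and claim values are hypothetical.

```python
import base64
import hashlib
import hmac
import json
import time


def b64url(data: bytes) -> str:
    """Base64url-encode without padding, as JWTs require."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def b64url_decode(text: str) -> bytes:
    """Decode base64url text, restoring the stripped padding."""
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def sign_jwt(claims: dict, secret: bytes) -> str:
    """Return header.payload.signature, signed with HMAC-SHA256 (HS256)."""
    header = {"alg": "HS256", "typ": "JWT"}
    signing_input = ".".join(
        b64url(json.dumps(part, separators=(",", ":")).encode("utf-8"))
        for part in (header, claims)
    )
    signature = hmac.new(secret, signing_input.encode("ascii"), hashlib.sha256).digest()
    return f"{signing_input}.{b64url(signature)}"


def verify_jwt(token: str, secret: bytes, now=None) -> dict:
    """Check the signature and expiry, then return the claims."""
    parts = token.split(".")
    if len(parts) != 3:
        raise ValueError("malformed token")
    header_b64, payload_b64, signature_b64 = parts
    if json.loads(b64url_decode(header_b64)).get("alg") != "HS256":
        raise ValueError("unexpected algorithm")
    expected = hmac.new(
        secret, f"{header_b64}.{payload_b64}".encode("ascii"), hashlib.sha256
    ).digest()
    # Constant-time comparison avoids leaking signature bytes through timing.
    if not hmac.compare_digest(expected, b64url_decode(signature_b64)):
        raise ValueError("bad signature")
    claims = json.loads(b64url_decode(payload_b64))
    if claims.get("exp", 0) <= (time.time() if now is None else now):
        raise ValueError("token expired")
    return claims
```

Because the claims and expiry travel inside the signed token, the receiver can validate identity without a stored password, and a stolen token stops working once its `exp` time passes.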

Headless invocation of Salesforce agent

A headless invocation of Salesforce Agent is an available option for scenarios where the Heroku application needs to perform actions on behalf of a user or leverage the contextual capabilities of the Salesforce platform without a traditional user interface flow. This method enables the Heroku service to programmatically access and interact with Salesforce Org functionalities, such as leveraging Agentforce and pre-configured topics, thereby enhancing your Heroku application with advanced Agentforce capabilities.

Secure microservices and CI/CD automation with AppLink

For developers and the IT leaders supporting them, the enhancement of AppLink with JWT authorization delivers two non-negotiable requirements for the connected enterprise.

- Consistent automation for Continuous Delivery: You can now eliminate manual steps and ensure a consistent, repeatable deployment process within your CI/CD pipeline.

- Defense against credential theft: By leveraging JWTs and on-demand tokens, your application gains a more secure mechanism for communication.

To get started with AppLink’s new JWT authorization features and learn how to implement them in your microservices, check out the AppLink Documentation and the JWT Authorization Setup Guide.

The post Heroku AppLink: Now Using JWT-Based Authorization for Salesforce appeared first on Heroku.

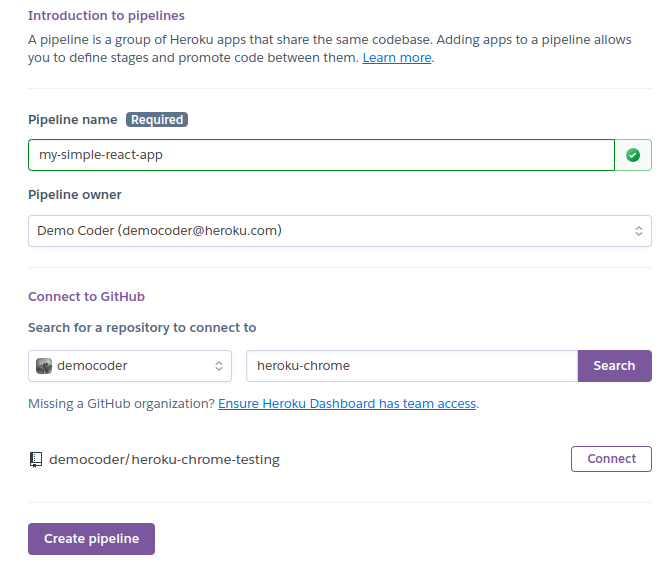

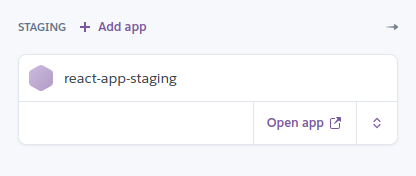

]]>We’re excited to announce a significant enhancement to how Heroku Enterprise customers connect their deployment pipelines to GitHub Enterprise Server (GHES) and GitHub Enterprise Cloud (GHEC). The new Heroku GitHub Enterprise Integration is now available in a closed pilot, offering a more secure, robust, and permanent connection between your code repositories and your Heroku apps. […]

The post Heroku GitHub Enterprise Integration: Unlocking Full Continuous Delivery for Enterprise Customers appeared first on Heroku.

We’re excited to announce a significant enhancement to how Heroku Enterprise customers connect their deployment pipelines to GitHub Enterprise Server (GHES) and GitHub Enterprise Cloud (GHEC). The new Heroku GitHub Enterprise Integration is now available in a closed pilot, offering a more secure, robust, and permanent connection between your code repositories and your Heroku apps.

This integration removes the final barrier preventing large enterprise customers from accessing our core continuous delivery features. By enabling a secure, permanent, app-based service identity, this integration now fully supports the use of Heroku Pipelines for automated, safe deployments and instantly accessible Review Apps for every feature branch. This ensures that developers at the world’s largest companies can finally utilize Heroku’s best-in-class workflow—deploying code consistently, automatically, and confidently from their preferred industry-standard version control system, all without being blocked by complex enterprise security or personnel turnover issues.

Scaling delivery: moving beyond personal credentials

Historically, connecting Heroku to GitHub Enterprise Server (GHES) and GitHub Enterprise Cloud (GHEC) relied on individual user credentials, typically in the form of Personal OAuth Tokens. While functional, this method presents critical friction for large organizations:

- Security mandates: Personal tokens often have broad permissions and conflict with stringent enterprise policies that demand a least privilege access model.

- Operational risk: If the specific user who set up the deployment pipeline leaves the organization or has their access revoked, the entire CI/CD process breaks down. This risk introduces fragility into mission-critical workflows.

- Maintenance burden: IT teams are forced to create and maintain separate “service accounts” or bot users purely to manage the connection, adding unnecessary complexity.

Heroku GitHub Enterprise Integration: dedicated service identity via GitHub App

This new integration is the recommended, next-generation method for connecting your Heroku Enterprise account to your organization’s GitHub environment (whether GitHub Enterprise Server or GitHub Enterprise Cloud).

Instead of relying on an individual user’s credentials (the traditional personal OAuth tokens), this feature uses a dedicated GitHub App. The GitHub App acts as a service identity, allowing Heroku to interact with your repositories on its own behalf.

This shift in authentication provides crucial advantages for enterprise security and stability by decoupling your deployment process from any single user account.

Key benefits of Heroku GitHub Enterprise Integration

This new architecture addresses critical enterprise needs, providing major improvements over the previous Heroku GitHub Deploys method:

Enhanced security and granular control

Unlike personal OAuth tokens, which grant access based on a user’s role, the integration leverages the inherent security model of GitHub Apps:

- Decoupled authentication: The GitHub App acts as a resilient, dedicated service identity, allowing Heroku to interact with your repositories on its own behalf. This decouples your deployment process from any single user account.

- Least privilege: You gain granular control over the permissions granted to Heroku, ensuring the integration can only access the specific repositories and perform the necessary deployment actions.

Deployment stability and team resilience

The integration also increases operational stability for enterprise-grade application development:

- No pipeline breakage: Because the GitHub App owns the connection, your continuous integration and delivery (CI/CD) pipelines will not break if the original configuring user leaves your organization or has their access revoked. This ensures business continuity regardless of personnel changes.

- Zero service accounts: This integration automatically serves the function of a service account, eliminating the need to create and maintain a separate bot user for connectivity.

Unlocking the Full Power of Heroku Continuous Delivery

By establishing this robust, resilient service identity, the integration ensures that core continuous delivery features function reliably for both GitHub Enterprise Cloud (GHEC) and GitHub Enterprise Server (GHES) organizations:

- Full Heroku pipelines functionality: Core features like automated deploys and environment promotions now function seamlessly and reliably. You can consistently deploy code automatically and confidently from your preferred version control system.

- Instantly accessible Review Apps: Every feature branch now receives its own Review App, enabling immediate, isolated testing for QA and product managers and accelerating your time-to-market.

- Consistent support for all enterprise environments: The integration fully supports both on-premises GHES and the cloud-based GHEC.